
Query Fan Out in GEO: How Topic Clusters Unlock AI Search Visibility

Query Fan Out is AI search’s secret weapon for expanding single queries into multiple intent-driven searches. Here’s how to align your content strategy with this fundamental shift in how AI systems discover and synthesize information.

Key Insights

- Query Fan Out expands one query into 8-10 related subqueries automatically during AI search processing

- Topic clusters mirror fan-out patterns, making them essential for comprehensive coverage

- GEO differs from SEO by focusing on entity-first rather than keyword-first optimization

- Coverage beats keyword density – answering more anticipated questions matters more than repetition

- Practical optimization requires systematic mapping of entities, subqueries, and content gaps

What is Query Fan Out?

Query Fan Out is Google’s AI-driven technique that expands a single search query into multiple related subqueries to improve retrieval and answer synthesis.

Rather than processing your search as one isolated request, Google’s AI systems automatically generate 8-10 related queries in parallel. These synthetic queries span different intents, formats, and semantic angles to capture what users might be trying to accomplish beyond their exact wording.

How Google AI Mode Uses Fan-Out

The process begins with prompted expansion, where an LLM generates alternate queries from your original search. The system doesn’t create random variations—it follows structured prompts emphasizing:

- Intent diversity (comparative, exploratory, decision-making)

- Lexical variation (synonyms, paraphrasing)

- Entity-based reformulations (specific brands, features, topics)

For example, if you search “best electric SUV,” Google’s fan-out might simultaneously query:

- “top rated electric crossovers”

- “EVs with longest range”

- “Rivian R1S vs Tesla Model X”

- “affordable family EVs”

- “EV SUV comparison chart 2025”
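To make the branching concrete, here is a toy sketch in Python. It is not Google's implementation, which uses an LLM prompted for diversity. Instead it assumes hand-written intent templates and small synonym and entity tables (all names and values are hypothetical) to show how one query branches along the three dimensions listed earlier: intent, lexical variation, and entities.

```python
# Hypothetical stand-ins for what an LLM would infer from the query.
INTENT_TEMPLATES = {
    "comparative": "{topic} comparison chart",
    "exploratory": "{topic} buying guide",
    "decision": "affordable {topic} options",
}
SYNONYMS = {"electric SUV": ["electric crossover", "EV SUV"]}
ENTITIES = {"electric SUV": [("Rivian R1S", "Tesla Model X")]}


def fan_out(query: str, limit: int = 10) -> list[str]:
    """Expand one query into up to `limit` subqueries along three axes."""
    topic = query.removeprefix("best ").strip()
    candidates = []
    # Intent diversity: same topic, different user goals.
    candidates += [t.format(topic=topic) for t in INTENT_TEMPLATES.values()]
    # Lexical variation: swap synonyms into the original wording.
    candidates += [query.replace(topic, s) for s in SYNONYMS.get(topic, [])]
    # Entity-based reformulation: head-to-head comparisons.
    candidates += [f"{a} vs {b}" for a, b in ENTITIES.get(topic, [])]
    # Deduplicate while keeping order, then cap the list.
    return list(dict.fromkeys(candidates))[:limit]


print(fan_out("best electric SUV"))
```

A real system would generate these variants dynamically and run the searches concurrently; the templates here only stand in for that generation step.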

Why Fan-Out Matters in LLM-Driven Search

Query Fan Out represents a fundamental shift from exact-match keyword targeting to semantic expansion. Traditional search engines relied heavily on matching the precise words you typed. AI search systems anticipate the broader information space around your query.

This creates a crucial implication: ranking #1 for your target keyword only gives you a 25% chance of appearing in AI Overviews. Success requires ranking well across multiple subqueries that Google explores in the background.
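One way to see why breadth across subqueries can beat a single #1 ranking is to merge the result lists of several subqueries. The sketch below uses reciprocal rank fusion (RRF), a standard rank-merging method. Google has not confirmed that it merges fan-out results this way, so treat the fusion step, the constant `k=60`, and the page names as illustrative assumptions.

```python
from collections import defaultdict


def rrf(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Reciprocal rank fusion: score(d) = sum over lists of 1 / (k + rank)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] += 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for stability.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical result lists for the main query and three fan-out subqueries.
results = [
    ["page_a", "page_b", "page_c"],  # main query: page_a ranks #1
    ["page_b", "page_c", "page_d"],  # subquery 1
    ["page_c", "page_b", "page_e"],  # subquery 2
    ["page_b", "page_d", "page_c"],  # subquery 3
]
fused = rrf(results)
print([doc for doc, _ in fused])  # ['page_b', 'page_c', 'page_d', 'page_a', 'page_e']
```

In this toy setup, `page_a` wins the main query but drops to fourth after fusion, because `page_b` and `page_c` appear across most subqueries.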

Common Objection: “Isn’t this just keyword variation?”

Query Fan Out goes beyond synonyms or keyword variations. While traditional SEO might target “car insurance” and “auto insurance” separately, fan-out generates contextually relevant queries like “GEICO vs Progressive comparison chart for new parents”—queries that wouldn’t appear in traditional keyword research but reflect actual user intent.

Query Fan Out vs Classic SEO Keywords

Traditional SEO focused on ranking individual pages for specific keywords. Query Fan Out operates on an entirely different principle: comprehensive intent coverage across related semantic territories.

SEO Keywords vs Fan-Out vs Topic Clusters

| Approach | Focus | Coverage | Strategy | Measurement |
|---|---|---|---|---|
| SEO Keywords | Individual terms | 1-3 variants | Exact/broad match | Keyword rankings |
| Query Fan Out | Semantic expansion | 8-10+ subqueries | Intent diversity | Subquery coverage |
| Topic Clusters | Comprehensive hubs | Full topic space | Hub + spokes | Entity coverage |

The Pitfalls of Keyword-Only Strategies

Relying solely on traditional keyword targeting creates several vulnerabilities in AI search:

- Narrow retrieval scope – AI systems may not surface your content for adjacent intents

- Missed synthetic queries – Fan-out often generates queries you wouldn’t research manually

- Limited answer synthesis – AI prefers sources that address multiple facets of a topic

Micro-CTA: Future-proof your strategy by expanding beyond keywords to comprehensive topic coverage.

Topic Clusters: The Fan-Out Ally

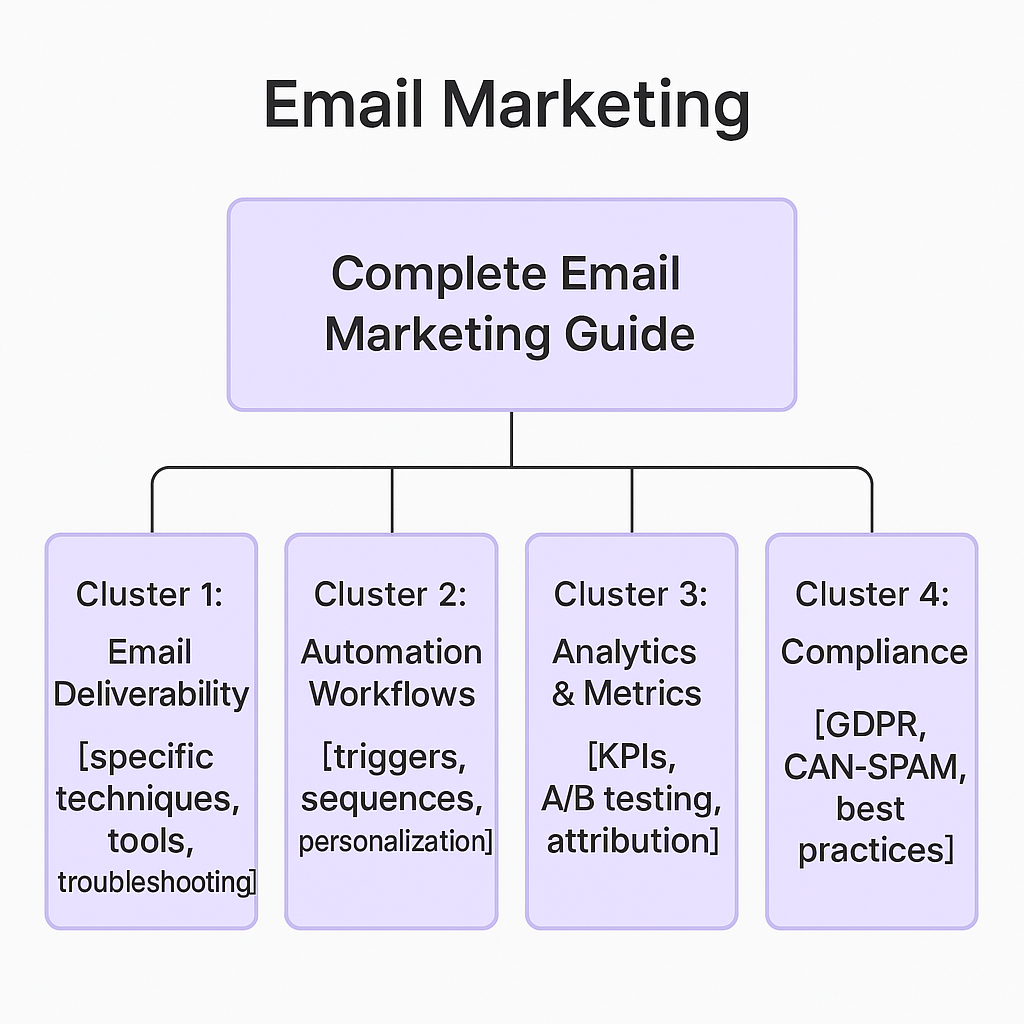

Topic clusters provide the content architecture that naturally aligns with Query Fan Out patterns. Instead of creating isolated pages for individual keywords, clusters organize content around central themes with supporting subtopics—mirroring how AI systems expand queries.

How Clusters Mirror Fan-Out Branching

When Google’s AI encounters your pillar page about “email marketing,” it can simultaneously retrieve information for subqueries like:

- “email deliverability best practices”

- “email automation workflows”

- “email marketing metrics”

- “GDPR compliance for email”

Each cluster page becomes discoverable for its specific subquery while the internal linking reinforces topical relationships.

Diagram: Query → Cluster → Subnodes (main query: “Email Marketing”)

Case Example: How LLMs Expand Queries into Clusters

Consider a user asking “how to improve website speed.” An AI system using Query Fan Out might generate:

Primary Intent: Website performance optimization

Synthetic Subqueries:

- “Core Web Vitals improvement techniques”

- “image optimization for web”

- “CDN setup guide”

- “WordPress speed optimization plugins”

- “mobile page speed best practices”

A well-structured topic cluster would have dedicated pages or sections for each subquery, all linked back to a comprehensive pillar page about website speed optimization.

PAA-Style Questions:

- Why are topic clusters important in GEO?

- How do clusters improve AI visibility?

Topic clusters improve AI visibility by providing multiple entry points for retrieval. Instead of competing for a single query, your content becomes discoverable across the entire fan-out space that AI systems explore.

GEO vs SEO: Adapting to AI Search

Generative Engine Optimization (GEO) and Search Engine Optimization (SEO) share common goals—visibility and discovery—but employ fundamentally different strategies for the AI search era.

Overlaps: Visibility and Discovery

Both approaches aim to help users find relevant information when they need it. Quality content, clear structure, and authoritative sources remain important across traditional and AI search surfaces.

Key Differences: Entity-Driven vs Keyword-First

| Aspect | SEO Approach | GEO Approach |
|---|---|---|
| Primary Focus | Keyword rankings | Entity coverage & citations |
| Content Strategy | Page-level optimization | Chunk-level optimization |
| Success Metrics | Rankings & traffic | Mentions & synthesis |
| Retrieval Model | Exact/semantic match | Multi-query expansion |
| Authority Signals | Links & domain metrics | Cite-worthiness & trust |

Hybrid Strategy Tips

- Maintain SEO foundations while adding GEO layers

- Structure content for both ranking and extraction

- Build topic clusters that serve traditional and AI search

- Monitor performance across both classic SERPs and AI platforms

- Iterate based on coverage gaps rather than just ranking changes

The future isn’t SEO vs GEO—it’s integrated optimization that performs across all discovery surfaces.

How to Align Content with Query Fan Out

Optimizing for Query Fan Out requires systematic mapping of your topic’s semantic territory and strategic content placement across anticipated subqueries.

Step 1: Map Entities + Semantic Clusters

Start by identifying your primary entity and its relationship network:

- Primary Entity: Your main topic (e.g., “project management”)

- Related Entities: Adjacent concepts (methodology, tools, skills, roles)

- Attributes: Characteristics and properties (agile, remote, budget, timeline)

- Sub-entities: Specific implementations (Scrum, Kanban, Waterfall)

Use tools like Google’s Knowledge Graph, Wikipedia category pages, and “People Also Ask” to discover semantic relationships.
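The entity map from Step 1 can be captured as a small data structure and used to seed candidate subqueries before you check them against PAA and AI Overviews. This is a minimal sketch. The `EntityMap` class and its combination rules (attribute + entity, entity + related concept, pairwise sub-entity comparisons) are hypothetical heuristics, not a documented fan-out algorithm.

```python
from dataclasses import dataclass, field
from itertools import combinations


@dataclass
class EntityMap:
    """Step 1 entity map: a primary entity plus its relationship network."""
    primary: str
    related: list[str] = field(default_factory=list)
    attributes: list[str] = field(default_factory=list)
    sub_entities: list[str] = field(default_factory=list)

    def seed_subqueries(self) -> list[str]:
        """Hypothetical heuristic: combine entities into candidate subqueries."""
        seeds = [f"{attr} {self.primary}" for attr in self.attributes]
        seeds += [f"{self.primary} {rel}" for rel in self.related]
        seeds += [f"{a} vs {b}" for a, b in combinations(self.sub_entities, 2)]
        return seeds


pm = EntityMap(
    primary="project management",
    related=["tools", "skills"],
    attributes=["agile", "remote"],
    sub_entities=["Scrum", "Kanban", "Waterfall"],
)
print(pm.seed_subqueries())
```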

Step 2: Expand Queries Using PAA + AI Overviews

Analyze current search results to understand how AI systems are already expanding your target queries:

- Search your target keyword and note AI Overview topics

- Collect “People Also Ask” questions for secondary intents

- Query ChatGPT/Claude about your topic and analyze their question patterns

- Review competitor content that ranks for related terms

Document 15-20 anticipated subqueries that AI systems might generate from your main topic.
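Questions gathered from PAA, AI Overviews, and assistants overlap heavily, so a quick normalize-and-count pass helps you reach a 15-20 item shortlist. This sketch assumes you paste the questions into a dictionary keyed by source, and it ranks each question by how many distinct sources surfaced it. The source names and sample questions are hypothetical.

```python
import re
from collections import Counter


def normalize(question: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    text = re.sub(r"[^\w\s]", "", question.lower())
    return " ".join(text.split())


def prioritize(sources: dict[str, list[str]], top_n: int = 20) -> list[tuple[str, int]]:
    """Rank normalized questions by how many distinct sources surfaced them."""
    counts = Counter()
    for questions in sources.values():
        # Use a set so each source counts at most once per question.
        counts.update({normalize(q) for q in questions})
    return counts.most_common(top_n)


# Hypothetical questions gathered by hand from each research source.
sources = {
    "paa": ["What is Scrum?", "Scrum vs Kanban?"],
    "ai_overview": ["what is scrum", "Kanban board setup"],
    "chatgpt": ["What is Scrum", "Scrum vs. Kanban"],
}
print(prioritize(sources))  # [('what is scrum', 3), ('scrum vs kanban', 2), ('kanban board setup', 1)]
```

Exact-string normalization will miss paraphrases ("Scrum or Kanban?"), so human review is still needed to merge near-duplicates.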

Step 3: Build Cluster Hubs + Spokes

Create a content architecture that addresses both primary queries and fan-out expansions:

- Hub Page (Pillar): Comprehensive overview with sections covering major subqueries

- Spoke Pages (Clusters): Deep-dive content for specific subqueries that need extensive coverage

- Internal Linking: Connect spokes to hub and cross-reference related spokes

Each spoke should be optimized for its specific subquery while reinforcing the overall topical authority of your hub.
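The hub-and-spoke linking rules in Step 3 are easy to check automatically once you have a crawl of internal links. A minimal sketch, assuming `links` maps each page path to the set of paths it links to (for example, exported from a crawler). The email-marketing paths are hypothetical, and the check covers only hub-to-spoke links, not spoke-to-spoke cross-references.

```python
def audit_cluster(hub: str, links: dict[str, set[str]]) -> list[str]:
    """Report missing hub<->spoke links in a hub-and-spoke cluster."""
    problems = []
    spokes = [page for page in links if page != hub]
    for spoke in spokes:
        # The pillar should link down to every spoke...
        if spoke not in links.get(hub, set()):
            problems.append(f"hub does not link to {spoke}")
        # ...and every spoke should link back up to the pillar.
        if hub not in links[spoke]:
            problems.append(f"{spoke} does not link back to hub")
    return problems


cluster = {
    "/email-marketing": {"/email-deliverability", "/email-automation"},
    "/email-deliverability": {"/email-marketing", "/email-automation"},
    "/email-automation": {"/email-deliverability"},
    "/email-metrics": {"/email-marketing"},
}
print(audit_cluster("/email-marketing", cluster))
# ['/email-automation does not link back to hub', 'hub does not link to /email-metrics']
```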

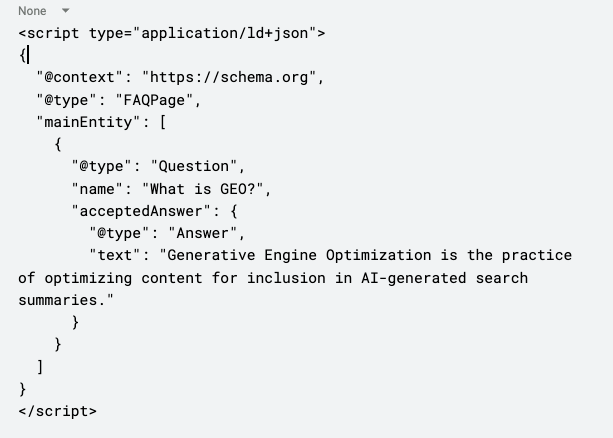

Step 4: Optimize Snippets + Schema Markup

Structure content for easy extraction by AI systems:

- Lead with clear answers (40-60 words for snippet opportunities)

- Use descriptive headings that mirror question patterns

- Include tables and lists for comparative and process content

- Add FAQ schema for commonly asked questions

- Implement Article schema to clarify content structure
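FAQ and Article markup are usually embedded as JSON-LD. This minimal sketch builds both from the schema.org `FAQPage`, `Question`, `Answer`, `Article`, and `Person` types. The helper names and sample strings are hypothetical, and real output should be checked with Google's Rich Results Test or the Schema Markup Validator before publishing.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def article_jsonld(headline: str, author: str, published: str, modified: str) -> dict:
    """Build schema.org Article markup; dates are ISO 8601 strings."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,
        "dateModified": modified,
    }


faq = faq_jsonld([("What is Query Fan Out?",
                   "Query Fan Out expands one query into several related subqueries.")])
# Embed each object in a <script type="application/ld+json"> tag on the page.
print(json.dumps(faq, indent=2))
```

Keeping `dateModified` accurate also supports the freshness cycle in Step 5.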

Step 5: Maintain Freshness + Updates

AI systems prefer current, accurate information. Establish update cycles for:

- Quarterly content audits to identify coverage gaps

- Monthly fact-checking of statistics and examples

- Seasonal refreshes of time-sensitive information

- Ongoing monitoring of new subqueries emerging in PAA and AI platforms
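The update cycles above can be tracked with a small script. This is a sketch, assuming each page records the last date each review task was completed. Mapping "quarterly" to 90 days and "monthly" to 30 days is an approximation, and the page paths are hypothetical.

```python
from datetime import date, timedelta

# Review cycles from Step 5, in days (approximations of quarterly/monthly).
CYCLES = {"content_audit": 90, "fact_check": 30}


def overdue(pages: dict[str, dict[str, date]], today: date) -> list[tuple[str, str]]:
    """Return (page, task) pairs whose last review is older than its cycle."""
    late = []
    for page, last_done in sorted(pages.items()):
        for task, days in CYCLES.items():
            last = last_done.get(task)
            # A task that was never recorded counts as overdue.
            if last is None or today - last > timedelta(days=days):
                late.append((page, task))
    return late


pages = {
    "/ev-suv-guide": {"content_audit": date(2024, 12, 15), "fact_check": date(2025, 3, 20)},
    "/ev-range-comparison": {"content_audit": date(2025, 3, 1)},
}
print(overdue(pages, today=date(2025, 4, 1)))
# [('/ev-range-comparison', 'fact_check'), ('/ev-suv-guide', 'content_audit')]
```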

Checklist: Is Your Content Fan-Out Ready?

Content Structure:

- Clear H2/H3 headings that match question patterns

- Snippet-ready answers within first 60 words of sections

- Tables/lists for comparison and process content

- Internal links to related subtopics

Entity Coverage:

- Primary entity clearly defined

- Related entities and attributes addressed

- Sub-entities covered with specific examples

- Cross-references to authoritative sources

Technical Optimization:

- FAQ schema implemented

- Article schema configured

- Clean URL structure

- Mobile-optimized experience

Tools and Frameworks for Fan-Out Optimization

Several tools can help you identify Query Fan Out patterns and optimize your content coverage systematically.

Query Fan-Out Analysis Tools

Screaming Frog + Gemini Integration

Metehan Yesilyurt developed a custom JavaScript integration that analyzes pages for Query Fan Out coverage. The tool:

- Detects primary entities/topics on each page

- Predicts 8-10 subqueries likely generated by AI Mode

- Scores coverage as Yes/Partial/No for each subquery

- Suggests follow-up questions users might ask
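The Yes/Partial/No scoring can be approximated without an LLM for a quick first pass. The sketch below replaces Gemini with a simple term-overlap heuristic. The thresholds, stopword list, and function names are assumptions and are not part of Yesilyurt's integration, which asks the model to judge coverage.

```python
import re

STOPWORDS = {"a", "an", "the", "for", "of", "to", "and", "in", "on", "with", "how", "what", "best"}


def terms(text: str) -> set[str]:
    """Lowercase word tokens minus a small stopword list."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def coverage(page_text: str, subquery: str, full: float = 0.8, partial: float = 0.4) -> str:
    """Score one subquery as Yes/Partial/No by the share of its terms found on the page."""
    wanted = terms(subquery)
    if not wanted:
        return "No"
    share = len(wanted & terms(page_text)) / len(wanted)
    if share >= full:
        return "Yes"
    return "Partial" if share >= partial else "No"


page = "Our image optimization guide for the web covers compression, lazy loading, and CDN caching."
for sq in ["image optimization for web", "CDN setup guide", "WordPress speed optimization plugins"]:
    print(sq, "->", coverage(page, sq))
```

Word overlap cannot detect a page that answers a subquery in different words, which is why an LLM or embedding-based judge is the better tool for the final pass.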

Setup considerations: the integration requires a Gemini API key, JavaScript rendering enabled in Screaming Frog, and careful rate limiting to avoid HTTP 429 (Too Many Requests) errors.
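The integration's own rate-limiting code isn't described here, so the following is a generic sketch of the usual remedy: retry with exponential backoff and random jitter when an API returns HTTP 429. `RateLimited`, `with_backoff`, and the delay values are assumptions. In practice, how you detect a 429 depends on the client library you call.

```python
import random
import time
from typing import Callable


class RateLimited(Exception):
    """Raise this from `call` when the API responds with HTTP 429."""


def with_backoff(call: Callable[[], str], retries: int = 5, base: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> str:
    """Retry `call` on RateLimited, doubling the delay each attempt, plus jitter."""
    if retries < 1:
        raise ValueError("retries must be at least 1")
    for attempt in range(retries):
        try:
            return call()
        except RateLimited:
            if attempt == retries - 1:
                raise  # Out of retries: surface the 429 to the caller.
            # Delay of base * 2^attempt seconds plus up to 1 s of random jitter.
            sleep(base * 2 ** attempt + random.random())
    raise AssertionError("unreachable")


# Demo with a fake API call that is rate limited twice, then succeeds.
attempts = {"n": 0}


def flaky_call() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RateLimited()
    return "ok"


waits: list[float] = []
result = with_backoff(flaky_call, sleep=waits.append)  # record delays instead of sleeping
print(result, [round(w, 2) for w in waits])
```

Injecting `sleep` keeps the demo instant. In a real crawl, leave the default `time.sleep` and honor any `Retry-After` header the API sends.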

Google Patents + AI Mode Documentation

Understanding the technical foundations helps predict how Query Fan Out will evolve:

- “Systems and methods for prompt-based query generation” details the expansion process

- Google AI Mode documentation explains implementation approaches

- Google’s Search Quality Rater Guidelines describe how human raters judge quality and trustworthiness, a useful indication of what Google may favor in AI results

SEO Clustering Tools

Traditional tools can be adapted for fan-out analysis:

- Surfer SEO: Identifies related topics and questions

- Clearscope: Provides semantic keyword suggestions

- MarketMuse: Maps content gaps across topic clusters

Limitations + Pitfalls of Tools

Over-optimization risks: Focusing solely on fan-out coverage can harm existing rankings if not implemented carefully. Test changes on subsets of content first.

Rate limiting issues: API-based tools often encounter usage limits during large-scale analysis. Plan for incremental crawling and data collection.

False positives: Automated tools may suggest irrelevant subqueries. Human review remains essential for filtering and prioritizing recommendations.

FAQs

What is Query Fan Out?

Query Fan Out is AI search’s technique for expanding single queries into 8-10 related subqueries during content retrieval, enabling more comprehensive answer synthesis.

Why does Query Fan Out matter for SEO/GEO?

Traditional SEO targets individual keywords, but AI systems retrieve content based on multiple subqueries simultaneously. Success requires coverage across the entire fan-out space, not just primary terms.

Is Query Fan Out replacing traditional SEO?

Query Fan Out represents evolution, not replacement. Traditional SEO foundations remain important, but optimization strategies must adapt to include entity coverage and semantic expansion.

How do topic clusters connect to Query Fan Out?

Topic clusters provide natural alignment with fan-out patterns. Hub-and-spoke content architectures mirror how AI systems expand queries into related subtopics and intents.

How do I optimize for Query Fan Out?

Map your topic’s entities and attributes, identify likely subqueries, create cluster content addressing each facet, optimize for snippet extraction, and maintain freshness across all content.

What tools help with Query Fan Out analysis?

Screaming Frog + Gemini integration, Google’s PAA and AI Overviews analysis, semantic SEO tools like Surfer and Clearscope, and manual query expansion through AI assistants.

Can Query Fan Out hurt my existing rankings?

Over-optimization focused solely on fan-out can disrupt existing performance. Implement changes gradually, test on content subsets, and monitor traditional rankings alongside AI visibility.

How is Query Fan Out different from keyword variations?

Keyword variations focus on synonyms and related terms. Query Fan Out generates contextually relevant intents that may not appear in traditional keyword research but reflect actual user goals.

Glossary

Query Fan Out: AI search technique that expands single queries into multiple related subqueries for comprehensive content retrieval

GEO (Generative Engine Optimization): Optimization strategies focused on visibility and citations in AI-generated answers rather than traditional search rankings

Topic Clusters: Content architecture organized around central themes (hubs) with supporting subtopics (spokes), connected through internal linking

Entity: People, places, things, or concepts that search engines can understand and categorize within knowledge graphs

Semantic Expansion: Process of identifying related concepts, synonyms, and contextual variations around core topics

LLM SEO: Search optimization specifically focused on large language model retrieval and synthesis patterns

AI Visibility: Measure of how often content appears in or influences AI-generated answers across platforms

Snippet: Short, extractable content segments optimized for featured snippet selection and AI answer synthesis

PAA (People Also Ask): Google’s related question feature that reveals common query expansions and user intents

TL;DR Summary

- Query Fan Out = AI-driven query expansion that turns single searches into 8-10 related subqueries automatically

- Topic Clusters = structural framework for capturing traffic across the entire fan-out space

- GEO vs SEO: entity-first optimization beats keyword-first approaches in AI search

- Action steps: Map entities + subqueries, build cluster content, optimize for extraction, maintain freshness

Conclusion + Next Steps

The shift from keywords to Query Fan Out represents more than a tactical change—it’s a fundamental evolution in how search systems understand and serve user intent. While traditional SEO focused on matching specific terms, AI search anticipates the broader information space around every query.

The transformation is clear: Success now requires comprehensive coverage across semantic territories rather than narrow keyword dominance. Topic clusters provide the architectural framework to align with this shift, while entity-first optimization ensures your content participates in AI answer synthesis.

GEO vs SEO: Key Differences, Overlaps, and How to Adapt

SEO is still dominant, but Generative Engine Optimization (GEO) is reshaping visibility. Many marketers fear GEO “kills SEO,” but the reality is more nuanced. While search engines continue to drive significant traffic, AI-powered tools like ChatGPT, Perplexity, and Google’s AI Overviews are increasingly answering user questions directly. This creates a new challenge: how do SEO and GEO differ, overlap, and work together?

The question isn’t whether to choose between SEO and GEO; it’s how to integrate both strategies to future-proof your visibility across all discovery surfaces.


What is SEO? What is GEO?

GEO vs SEO in 40 words: SEO optimizes pages for search engine rankings through keywords, backlinks, and technical health. GEO optimizes content to be cited by AI systems like ChatGPT and Perplexity through structured, extractable passages and semantic clarity. Both drive visibility but target different discovery surfaces.

Definition of SEO

SEO (Search Engine Optimization) is the practice of optimizing web pages to rank higher in search engine results pages. The core approach involves:

- Ranking factors: backlinks, domain authority, technical health, and content relevance

- Target surfaces: Google/Bing search results, featured snippets, People Also Ask

- Evolution: From keyword matching to mobile-first indexing and Core Web Vitals

- Success metrics: organic traffic, click-through rates, and conversions

Definition of GEO

GEO (Generative Engine Optimization) focuses on optimizing content so AI systems can chunk, retrieve, and generate answers that cite your content. This emerging field involves:

- Core principle: structuring content for AI retrieval and synthesis

- Target surfaces: ChatGPT responses, Perplexity citations, Google AI Overviews

- Key factors: retrievability, extractability, and trust signals

- Success metrics: citations in AI answers, brand mentions, and retrieval presence

Why This Comparison is Rising Now

Between 2023 and 2025, Google’s AI Overviews, ChatGPT, and Perplexity fundamentally shifted how people discover information. Search marketers are now questioning budgets: should resources go to traditional SEO or to new GEO initiatives?

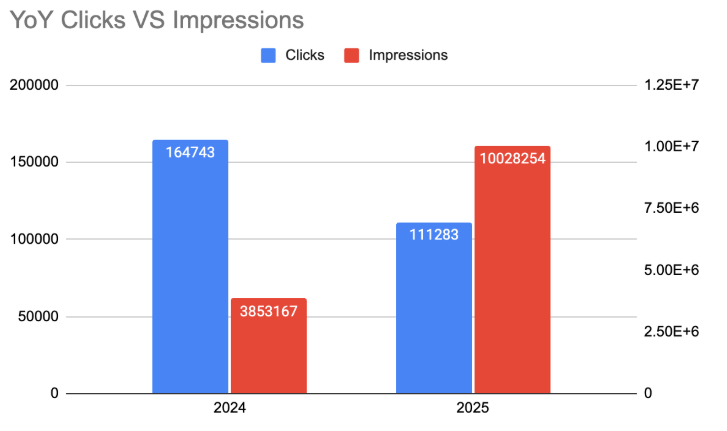

The reality is both will co-exist. According to our analysis, search demand for SEO has actually grown over the past year, while “AI Optimization” has emerged as a trending complementary discipline.

GEO vs SEO: Key Differences

The strategic differences between SEO and GEO become clear when we examine their core mechanics:

| Aspect | SEO | GEO |
|---|---|---|
| Goal | Rank high in search results | Get cited in AI-generated responses |
| Ranking Factors | Backlinks, authority, technical health | Retrieval cues, structured data, semantic clarity |
| Visibility Surfaces | 10 blue links, snippets, PAA | AI Overviews, ChatGPT answers, LLM retrieval |
| User Journey | Click → visit page → convert | Get answer → may visit later or never |
| Content Focus | Optimize full pages (titles, headers, meta) | Create quotable, self-contained passages |
| Success Metrics | Traffic, CTR, conversions | Citations, brand mentions, retrieval presence |

Ranking Factors

SEO relies on established signals: high-quality backlinks from authoritative sites, domain authority built over time, technical excellence (site speed, mobile optimization), and comprehensive content that matches search intent.

GEO operates differently. AI uses “answer relevance” instead of “page authority.” The key factors include:

- Chunk-level optimization: Content broken into semantically tight, self-contained passages

- Entity density: Clear, structured information about specific topics

- Schema markup: Machine-readable structured data

- E-E-A-T signals: Expertise, experience, authoritativeness, and trustworthiness

Visibility Surfaces

SEO targets traditional search surfaces: the classic 10 blue links, featured snippets, People Also Ask boxes, and local search results. Users see your listing, click through to your site, and hopefully convert.

GEO targets generative surfaces where AI synthesizes information from multiple sources into a single response. Your content might be quoted or referenced within an AI-generated answer, with or without direct attribution to your site.

Metrics & KPIs

SEO metrics are well-established: organic traffic from Google Analytics, keyword rankings from tools like SEMrush, and conversion tracking through goal completion or e-commerce data.

GEO requires new measurement approaches: citations in AI answers, brand mentions across AI platforms, and share of voice in AI-generated responses. Tools like SEMrush’s AI SEO Toolkit now track brand visibility and perception in AI responses.
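There is no standard formula for AI share of voice. One simple definition is the fraction of sampled AI answers that cite a domain at least once, and the sketch below computes it. It assumes you have already collected the cited URLs by running a fixed prompt set through each platform. The domains are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_share(answers: list[list[str]]) -> dict[str, float]:
    """Fraction of sampled AI answers that cite each domain at least once."""
    if not answers:
        return {}
    counts = Counter()
    for cited_urls in answers:
        # Count a domain once per answer, however many of its URLs are cited.
        counts.update({urlparse(u).netloc.removeprefix("www.") for u in cited_urls})
    return {domain: n / len(answers) for domain, n in counts.most_common()}


# Hypothetical citations collected from four AI answers to a fixed prompt set.
sample = [
    ["https://www.example.com/geo-guide", "https://example.net/seo"],
    ["https://example.net/ai-search", "https://news.example.org/story"],
    ["https://example.com/faq", "https://www.example.com/geo-guide"],
    [],
]
print(citation_share(sample))
```

Answers with no citations still count in the denominator, so the metric reflects how often you appear across the whole prompt set.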


Where GEO and SEO Overlap

Despite their differences, GEO is built on SEO fundamentals rather than replacing them. The overlap areas provide reassurance for marketers worried about abandoning proven strategies.

Content Structure (Chunking = SEO Readability)

What GEO calls “chunking” (breaking content into semantically coherent passages) directly mirrors SEO-friendly formatting practices.

Clear heading hierarchies (H2/H3), bullet points, and short paragraphs help both Google’s ranking algorithms and LLM retrieval systems. A well-structured article with logical subheadings serves both audiences effectively.

Example: A technical guide with clear H2 sections like “What is Technical SEO?” followed by concise explanations works for both traditional search ranking and AI citation.

Schema & Metadata

Structured data helps Google understand your content, and that same schema markup aids LLM retrieval.

FAQPage schema is particularly valuable because it:

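- Makes question-answer pairs eligible for FAQ rich results in traditional search (since August 2023, Google shows these mainly for well-known government and health sites)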

- Provides clear question-answer pairs for AI systems to extract

- Creates natural opportunities for featured snippets

Authority & Trust Signals

Both SEO and GEO require E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). The difference is in application:

- SEO evaluates authority through backlink profiles and domain strength

- GEO surfaces trusted voices more directly, preferring authoritative content over purely link-driven rankings

In both cases, demonstrating expertise through author bylines, credentials, citations to reputable sources, and consistent brand messaging across platforms builds the authority signals both systems value.

GEO Tactics in Practice

Moving from theory to implementation, here are the specific tactics that improve GEO performance based on our analysis of successful citations:

Chunking & Entity Density

Break text into 200-300 word sections that can stand alone semantically. Each section should cover one clear concept with sufficient context for an AI system to extract and understand it independently.
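A simple way to enforce the size target is to pack whole paragraphs into chunks with a word cap. This minimal sketch uses greedy packing, which is an assumption about how to chunk, not a rule from any AI platform. Paragraphs are never split, so a single very long paragraph becomes an oversized chunk, and the final chunk may fall below 200 words and need manual merging.

```python
def chunk_paragraphs(text: str, max_words: int = 300) -> list[str]:
    """Greedily pack whole paragraphs into chunks of at most ~max_words words."""
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        words = len(para.split())
        # Start a new chunk if this paragraph would push the current one past the cap.
        if current and count + words > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += words
    if current:
        chunks.append("\n\n".join(current))
    return chunks


doc = "\n\n".join(["word " * 120] * 5)  # five 120-word paragraphs
print([len(c.split()) for c in chunk_paragraphs(doc)])  # [240, 240, 120]
```

Splitting at H2/H3 boundaries first, then applying the cap within each section, keeps each chunk closer to "one clear concept."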

Reinforce entities naturally throughout your content. If writing about “technical SEO,” include related entities like “crawlability,” “indexability,” “site speed,” and “Core Web Vitals” in contextually appropriate ways.

Add micro-CTAs at section level to guide users who arrive via AI citations to relevant next steps or related content.

FAQ Integration

Insert 5-8 FAQs targeting AI retrieval within your content. Base these on questions users actually ask AI systems about your topic. Examples for GEO content might include:

- “Is GEO replacing SEO?”

- “What are GEO ranking factors?”

- “How do I measure GEO success?”

Structure these as natural language Q&A rather than keyword-stuffed variations.

Snippet Readiness

Add 40-60 word definitions early in your content that directly answer the primary question. This creates opportunities for both featured snippets and AI citations.
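To check the 40-60 word guideline across a draft, you can count the words in the first paragraph under each heading. The sketch assumes drafts are written in Markdown with `##`/`###` headings. The function name and sample text are hypothetical.

```python
import re


def lead_answer_report(markdown: str, low: int = 40, high: int = 60) -> dict[str, int]:
    """Map each H2/H3 heading whose first paragraph is outside low..high words to its word count."""
    report = {}
    # Split on Markdown H2/H3 lines; the capture group keeps each heading's text.
    parts = re.split(r"^#{2,3}\s+(.+)$", markdown, flags=re.MULTILINE)
    for heading, body in zip(parts[1::2], parts[2::2]):
        paragraphs = [p for p in body.strip().split("\n\n") if p.strip()]
        first = paragraphs[0] if paragraphs else ""
        words = len(first.split())
        if not low <= words <= high:
            report[heading.strip()] = words
    return report


draft = "## What is GEO?\n\n" + "word " * 50 + "\n\n## Why now?\n\nToo short.\n"
print(lead_answer_report(draft))  # {'Why now?': 2}
```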

Include tables and lists for comparative information. AI systems excel at extracting structured data for synthesis into responses.

Keep language clean for AI parsing by avoiding excessive jargon, overly complex sentences, or promotional language that reduces extractability.

SEO + GEO Together: A Unified Strategy

Rather than choosing between SEO and GEO, successful marketers integrate both approaches based on content type and user intent.

When to Prioritize SEO

Focus primarily on SEO tactics for:

- Classic queries with high commercial intent (“buy X,” “X near me”)

- Transactional content designed to capture purchase-ready users

- Evergreen traffic drivers that consistently bring qualified visitors

When to Optimize for GEO

Prioritize GEO tactics for:

- Emerging concepts where you can establish thought leadership

- Question-based content that users commonly ask AI systems

- Brand queries where you want to control the narrative in AI responses

Integration Playbook

The most effective approach combines both strategies:

- Content structuring benefits both: Well-organized content with clear headings, logical flow, and semantic structure serves traditional search ranking and AI extraction equally well

- KPI layering: Track traditional SEO metrics (traffic, rankings) alongside GEO metrics (citations, brand mentions) to understand your complete visibility picture

- Platform diversification: Create content that works for Google search while also being distributed across forums, social platforms, and industry publications where AI systems might discover it

Future of Search: From SEO → AEO → GEO

Understanding the evolution from SEO to Answer Engine Optimization (AEO) to GEO provides context for what’s coming next.

Brief History of SEO & AEO

SEO began with keyword matching and link building. AEO emerged as Google introduced featured snippets and voice search, requiring optimization for zero-click answers.

Why AI Acceleration Changed Everything

The 2023-2025 period marked a fundamental shift. Instead of displaying ranked results, AI systems began synthesizing information from multiple sources into coherent responses. This created entirely new visibility opportunities and challenges.

What’s Next: Multi-Modal Engines (text + voice + vision)

Future AI systems will process text, voice, and visual content simultaneously. Optimizing for these multi-modal engines will require:

- Visual content optimization with descriptive alt text and structured captions

- Voice-friendly formatting for audio AI assistants

- Cross-modal consistency where written, visual, and audio content reinforce the same messages
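The alt-text item above is the easiest to audit today. This sketch uses Python's standard `html.parser` to list images with a missing or empty `alt` attribute. Flagging empty `alt=""` is a judgment call, since accessibility guidance treats it as correct for purely decorative images, so review the flagged items before editing. The class name and sample HTML are hypothetical.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Collect src values of <img> tags whose alt attribute is missing or empty."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src") or "(no src)")


audit = AltTextAudit()
audit.feed('<p><img src="chart.png" alt="EV range comparison chart">'
           '<img src="hero.jpg"><img src="logo.svg" alt=""></p>')
print(audit.missing)  # ['hero.jpg', 'logo.svg']
```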

Common Questions (FAQ)

What is GEO vs SEO?

GEO optimizes content to be cited by AI systems like ChatGPT through structured, extractable passages. SEO optimizes pages for search engine rankings through keywords and backlinks. Both target visibility but on different surfaces.

Is GEO replacing SEO?

No. GEO builds on SEO principles and both strategies work together. Search engines still drive significant traffic, while AI systems create new citation opportunities. Successful marketers integrate both approaches.

How do GEO tactics overlap with SEO?

Content structure, schema markup, and authority signals benefit both SEO and GEO. Well-organized content with clear headings helps Google ranking and AI extraction. FAQ schema gives both search engines and AI systems explicit question-answer pairs to work with.

What is chunking in GEO?

Chunking breaks content into self-contained passages of 200-300 words that AI systems can extract and understand independently. Each chunk should focus on one concept with sufficient context.

How do AI engines rank content?

AI systems prioritize answer relevance over page authority. Key factors include semantic clarity, structured data, entity density, and trustworthiness signals rather than traditional backlink metrics.

Is GEO relevant for small businesses?

Yes. Small businesses can compete effectively in GEO by creating authoritative, well-structured content about their expertise. AI systems often prefer specific, expert content over generic marketing material.

What KPIs measure GEO success?

Track citations in AI responses, brand mentions across AI platforms, share of voice in AI-generated answers, and brand sentiment in AI outputs. Tools like SEMrush’s AI SEO Toolkit provide these metrics.

Does Google use GEO?

Google’s AI Overviews and AI Mode incorporate GEO principles by extracting and synthesizing content from multiple sources. Optimizing for these features requires GEO tactics like chunking and structured data.

Conclusion

GEO is not replacing SEO. Both strategies are essential for comprehensive visibility in 2025 and beyond. While search engines continue driving qualified traffic through traditional ranking factors, AI systems create new opportunities for brand exposure through citations and mentions.

Marketers must integrate both strategies now to stay competitive. The fundamentals remain the same: create authoritative, well-structured content that helps users solve real problems. The difference is ensuring that content works across both traditional search surfaces and emerging AI platforms.

How to Optimize for LLM Search: The Complete 2025 Guide to GEO & AI Visibility

Estimated reading time: 16 minutes

Summary: LLM search optimization (also called Generative Engine Optimization or GEO) is the practice of structuring content so AI models like ChatGPT, Gemini, Claude, and Perplexity can easily find, understand, and cite your content in their responses. This comprehensive guide covers the technical frameworks, content strategies, and measurement approaches needed to win in the age of AI-powered search.

Key Insights

- 58% of consumers now use AI tools for product recommendations (up from 25% in 2023), creating massive new discovery opportunities

- LLM optimization complements traditional SEO—don’t abandon classic tactics, but add AI-specific layers

- Zero-click answers are reshaping traffic patterns—success metrics shift from clicks to citations and brand mentions

- Content structure matters more than keywords—clear headings, FAQ formats, and semantic markup drive AI inclusion

- Authority and freshness trump link volume—LLMs prefer recent, cited content from recognized sources

Why LLM Search Matters Now

The way people discover information has fundamentally shifted. AI-first search platforms like ChatGPT, Google AI Overviews, Perplexity, and Claude are no longer experimental—they’re mainstream discovery channels driving real business outcomes.

The Rise of AI-First Discovery

ChatGPT alone processes over 1 billion user messages daily, while Google’s AI Overviews now appear for millions of queries. More telling: companies like Vercel report that ChatGPT now drives 10% of their new signups (up from 1% six months earlier), and Tally saw AI search become their largest acquisition channel, helping grow from $2M to $3M ARR in four months.

Why Blue Links Are No Longer the Only Path

Traditional search follows a predictable pattern: query → ranked results → click → consume. AI search changes this to: query → synthesized answer → optional click. This “answer-first” approach means your content might be seen, cited, and acted upon without users ever visiting your site.

Business Risk of Ignoring LLM Optimization

Gartner predicts 50% of search engine traffic will shift to AI platforms by 2028. Early data shows organic traffic declining 15-25% for brands unprepared for this transition. Companies that don’t adapt risk becoming invisible in the channels where their customers increasingly search.

Generative Engine Optimization (GEO) as the Next Layer

GEO extends traditional SEO principles into the AI era. Instead of optimizing solely for search engine ranking algorithms, you’re also optimizing for LLM retrieval and synthesis systems. The goal: ensure your content is not just findable, but citable, trustworthy, and contextually relevant when AI models generate answers.

What is LLM Optimization?

Definition: LLM optimization is the strategic process of structuring content, building authority, and implementing technical elements so that large language models can easily discover, understand, and cite your content when generating responses to user queries.

Core Difference Between LLM Optimization, GEO, AEO, and SEO

| Aspect | Traditional SEO | LLM Optimization (LLMO) | GEO | AEO |
| --- | --- | --- | --- | --- |
| Primary Goal | Rank in search results | Get cited in AI responses | Optimize for generative engines | Optimize for answer engines |
| Success Metric | Rankings, traffic, CTR | Citations, mentions, inclusion | Brand visibility in AI answers | Share of voice in answers |
| Optimization Target | Pages and keywords | Content chunks and entities | Complete content ecosystems | Direct answer formats |
| Content Focus | Keyword density, backlinks | Semantic clarity, structure | Topic authority, freshness | Concise, factual responses |
| Technical Requirements | Meta tags, schema | Structured data, clean HTML | Entity markup, relationships | Featured snippet optimization |

How LLM Optimization Works

LLM-powered search tools typically use Retrieval-Augmented Generation (RAG) to answer queries:

- Query Understanding: User’s question is analyzed for intent and context

- Content Retrieval: Relevant content chunks are retrieved from indexed sources

- Relevance Scoring: Retrieved content is ranked by relevance, authority, and freshness

- Answer Synthesis: Top-scoring content is synthesized into a coherent response

- Citation Assignment: Sources are attributed based on contribution to the answer

Your content enters this pipeline during the retrieval phase. To be selected for synthesis, it must be semantically relevant, structurally clear, and authoritative.

Common Questions About LLM Optimization

“Is GEO the same as SEO?” No. SEO optimizes for search engine algorithms; GEO optimizes for AI model training and retrieval systems. However, they complement each other—good SEO practices often improve GEO performance.

“What does LLM optimization involve?” It involves content structuring (clear headings, FAQ formats), authority building (citations, author credentials), technical optimization (schema markup, clean HTML), and entity consistency (brand mentions, topic coverage).

How LLMs Affect Search Results

Understanding how LLMs retrieve, rank, and cite content is crucial for optimization success. Unlike traditional search engines that match keywords and analyze backlinks, LLMs operate through semantic understanding and pattern recognition.

How LLMs Retrieve, Rank, and Cite Content

Retrieval Process

- Content is broken into semantic chunks (typically 150-300 tokens)

- User queries (and the content chunks themselves) are converted to embedding vectors representing meaning

- Retrieval systems find chunks with semantic similarity to the query

- Hybrid pipelines combine keyword matching with semantic search for precision
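A common way to merge the keyword and semantic result lists in a hybrid pipeline is Reciprocal Rank Fusion (RRF), which sums 1 / (k + rank) for each chunk across rankings. The sketch below is a minimal illustration, assuming simple term overlap as a stand-in for BM25 and term-frequency cosine as a stand-in for learned embeddings; the chunk IDs and texts are hypothetical, and k = 60 is the constant from the original RRF paper, not a documented setting of any AI search product.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase and strip basic punctuation from each whitespace-separated token.
    return [t.strip(".,?!:;").lower() for t in text.split() if t.strip(".,?!:;")]


def keyword_score(query: str, doc: str) -> int:
    # Stand-in for BM25: count distinct query terms that appear in the chunk.
    doc_terms = set(tokenize(doc))
    return sum(1 for t in set(tokenize(query)) if t in doc_terms)


def cosine_score(query: str, doc: str) -> float:
    # Stand-in for embedding similarity: cosine over term-frequency vectors.
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    dot = sum(q[t] * d[t] for t in q)
    norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(sum(v * v for v in d.values()))
    return dot / norm if norm else 0.0


def rrf(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    # Reciprocal Rank Fusion: each ranking adds 1 / (k + rank), with rank starting at 1.
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


chunks = {
    "faq": "What is LLM optimization? LLM optimization structures content so AI models can cite it.",
    "pricing": "Our pricing plans start at 29 dollars per month.",
    "schema": "Schema markup such as FAQPage helps AI models understand content structure.",
}
query = "how does LLM optimization help AI models cite content"

keyword_ranking = sorted(chunks, key=lambda c: keyword_score(query, chunks[c]), reverse=True)
semantic_ranking = sorted(chunks, key=lambda c: cosine_score(query, chunks[c]), reverse=True)
for chunk_id, score in rrf([keyword_ranking, semantic_ranking]):
    print(f"{chunk_id}: {score:.4f}")
```

RRF only uses rank positions, so it needs no calibration between the very different score scales of keyword and vector search, which is why it is a popular default for hybrid retrieval.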

Ranking Factors:

- Semantic relevance: How well content matches query intent

- Authority signals: Author credentials, domain trust, citation count

- Freshness: Recent publication or update dates

- Clarity: Well-structured, scannable content with clear headings

- Completeness: Comprehensive coverage of the topic
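No AI search product publishes how it weights these factors, so the following is only an illustration of how several signals could be blended into one score. The weights, the 180-day freshness half-life, and the assumption that every signal is pre-normalized to 0-1 are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Candidate:
    # All signals are assumed to be pre-normalized to the 0-1 range.
    chunk_id: str
    relevance: float     # semantic match to the query intent
    authority: float     # e.g. a normalized author/domain trust signal
    clarity: float       # e.g. heading and structure quality
    completeness: float  # e.g. share of the topic's subquestions covered
    last_updated: date


# Hypothetical weights (they sum to 1.0); real systems do not disclose theirs.
WEIGHTS = {"relevance": 0.4, "authority": 0.2, "freshness": 0.15, "clarity": 0.15, "completeness": 0.1}
HALF_LIFE_DAYS = 180  # hypothetical: the freshness signal halves roughly every six months


def freshness(last_updated: date, today: date) -> float:
    """Exponential decay: 1.0 when updated today, 0.5 after one half-life."""
    age_days = max((today - last_updated).days, 0)
    return 0.5 ** (age_days / HALF_LIFE_DAYS)


def score(c: Candidate, today: date) -> float:
    return (
        WEIGHTS["relevance"] * c.relevance
        + WEIGHTS["authority"] * c.authority
        + WEIGHTS["freshness"] * freshness(c.last_updated, today)
        + WEIGHTS["clarity"] * c.clarity
        + WEIGHTS["completeness"] * c.completeness
    )


today = date(2025, 6, 1)
candidates = [
    Candidate("stale-guide", 0.8, 0.6, 0.9, 0.7, date(2022, 6, 1)),
    Candidate("fresh-guide", 0.8, 0.6, 0.9, 0.7, date(2025, 5, 1)),
]
for c in sorted(candidates, key=lambda c: score(c, today), reverse=True):
    print(c.chunk_id, round(score(c, today), 3))
```

With otherwise identical signals, the recently updated chunk ranks first, which is the intuition behind the freshness factor above.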

Citation Decisions:

- Factual density: Content with specific, verifiable claims

- Source credibility: Recognized authors, institutions, or brands

- Uniqueness: Original data, research, or insights not found elsewhere

Why Some Sites Appear, Others Don’t

Sites that get cited typically have:

- Moderate domain authority (DR 25-35+ range)

- Diverse referring domains (300+ unique sites linking)

- Branded anchor text concentration (brand name in link anchors)

- Clean technical structure (proper HTML, schema markup)

- Regular content updates (fresh timestamps, current information)

Sites that get ignored often suffer from:

- Extremely low authority (under DR 20, few referring domains)

- Poor content structure (no headings, text buried in JavaScript)

- Promotional focus (sales-heavy language, no substantive information)

- Outdated information (stale content, missing publication dates)

Common Misconceptions

“Google still rules everything”: While Google maintains 90% search market share, AI interfaces are changing how results are presented. Google’s own AI Overviews appear before traditional blue links, meaning LLM optimization affects Google visibility too.

“Keywords don’t matter for AI”: Keywords still matter, but semantic meaning matters more. LLMs understand synonyms, context, and intent—stuffing exact-match keywords won’t help if your content lacks semantic depth.

Mini Case Example: LLM Retrieval in Action

When someone asks ChatGPT “What are the best project management tools?”, the system:

- Retrieves relevant content chunks (passages) from software review sites, vendor documentation, and user forums

- Ranks based on content freshness, citation authority, and semantic relevance

- Synthesizes a response mentioning 3-5 tools with brief descriptions

- Cites sources that contributed specific claims or data points

Sites like Zapier, NerdWallet, Reddit, Wikipedia, G2, TechRadar and Forbes frequently get cited because they provide structured comparisons with specific features and pricing data, which is exactly what LLMs need to build comprehensive answers.

LLM Optimization vs Traditional SEO

While LLM optimization and traditional SEO share foundational principles, their execution differs significantly. Understanding these differences helps you adapt your strategy without abandoning proven tactics.

Key Differences in Approach

Traditional SEO Priorities:

- Page-level optimization: Title tags, meta descriptions, keyword density

- Link building: Domain authority, backlink profiles, anchor text diversity

- Technical structure: Site speed, mobile-friendliness, crawlability

- Content volume: Publishing frequency, comprehensive topic coverage

LLM Optimization Priorities:

- Chunk-level optimization: Semantic clarity, extractable content blocks

- Entity building: Brand mentions, topical authority, citation networks

- Content structure: FAQ formats, clear headings, scannable sections

- Content quality: Factual accuracy, unique insights, authoritative sources

Schema-First vs Keyword-First Optimization

Keyword-First (Traditional SEO):

- Target specific search terms and variations

- Optimize content density and placement

- Build topic clusters around keyword themes

Schema-First (LLM Optimization):

- Structure content with semantic markup

- Use FAQ, HowTo, and Article schema

- Focus on entity relationships and context

| Traditional SEO Tactic | LLM Optimization Equivalent |
| --- | --- |
| Keyword research | Intent and entity mapping |
| Title tag optimization | H1/H2 semantic clarity |
| Meta description | Content summaries and key takeaways |
| Internal linking | Entity relationship building |
| Backlink building | Citation and mention acquisition |

Objection Handling: “Does SEO Still Matter?”

Yes, traditional SEO remains crucial. Many LLM systems use traditional search infrastructure for content retrieval. Google’s AI Overviews, for example, often pull from high-ranking organic results.

The key insight: LLM optimization amplifies good SEO practices rather than replacing them. Sites with strong domain authority, clean technical structure, and quality content have advantages in both traditional and AI search.

Best approach: Maintain your existing SEO foundation while adding AI-specific optimizations like structured data, content chunking, and entity consistency.

Technical Checklist for LLM Optimization

This actionable framework ensures your content meets both technical and content requirements for AI visibility. Focus on the highest-impact items first, then work through the complete list systematically.

Crawlability & Indexation

Essential Requirements:

- ✅ Clean robots.txt – Allow AI crawlers (GPTBot, ChatGPT-User, etc.)

- ✅ XML sitemap – Include all important pages with accurate lastmod dates

- ✅ Proper URL structure – Descriptive URLs, avoid dynamic parameters

- ✅ Fast loading – Core Web Vitals in green, under 2.5s LCP
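To confirm that robots.txt actually admits the AI crawlers you care about, you can test it with Python’s standard `urllib.robotparser`. This minimal sketch parses an inline robots.txt; the rules and URLs are hypothetical, and for a live site you would instead call `set_url()` and `read()` on the parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: OpenAI's crawlers may fetch everything,
# all other bots are kept out of /admin/.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: *
Disallow: /admin/
"""


def check_crawlers(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Return whether each user agent may fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


results = check_crawlers(
    ROBOTS_TXT,
    ["GPTBot", "ChatGPT-User", "PerplexityBot"],
    "https://example.com/guides/llm-optimization",
)
for agent, allowed in results.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Agents without their own group (PerplexityBot here) fall back to the `*` rules, so a broad `Disallow` there can silently block AI crawlers you never listed.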

AI-Specific Considerations:

- JavaScript rendering: Ensure content is accessible without heavy JS execution

- Clean HTML structure: Use semantic elements (article, section, aside)

- Avoid content hiding: No accordion content that requires interaction to view

- Mobile optimization: AI crawlers often use mobile user agents

Schema Markup Implementation

Priority Schema Types:

- FAQPage Schema: For Q&A sections (highest impact for AI retrieval)

- Article Schema: With author, datePublished, and dateModified

- Organization Schema: Brand entity information

- HowTo Schema: For procedural content

- Product/Service Schema: For commercial content

FAQPage JSON-LD Example:

{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [{
    "@type": "Question",
    "name": "What is LLM optimization?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "LLM optimization is the process of structuring content so AI models can easily find, understand, and cite it in responses."
    }
  }]
}
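Hand-typed JSON-LD easily picks up curly quotes or trailing commas that make it invalid, so it is often safer to generate the markup from your Q&A content. A minimal sketch using Python’s `json` module; the function name `faq_jsonld` and the sample question are illustrative.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a FAQPage JSON-LD script tag from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # json.dumps always emits straight double quotes, so the output is valid JSON.
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # JSON-LD is embedded in HTML inside a script tag of this type.
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(faq_jsonld([
    ("What is LLM optimization?",
     "LLM optimization is the process of structuring content so AI models "
     "can easily find, understand, and cite it in responses."),
]))
```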

Content Structure Requirements

Heading Hierarchy:

- ✅ One H1 per page with primary topic

- ✅ Logical H2/H3 structure matching content flow

- ✅ Question-based headings where appropriate (“What is X?”, “How to Y?”)

Content Formatting:

- ✅ Summary sections – Lead with key takeaways

- ✅ Scannable paragraphs – 2-3 sentences maximum

- ✅ Bulleted lists – Break complex information into digestible points

- ✅ Data tables – Use HTML tables, not images, for data presentation

Content Chunking Guidelines:

- One concept per section with clear H2/H3

- 150-220 words per chunk optimal for AI extraction

- Lead with the answer then provide supporting detail

- Include supporting data with sources and timestamps
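These chunking guidelines can be checked mechanically as a first-pass audit: split a page at its H2/H3 headings and flag sections outside the 150-220 word range. A minimal sketch, assuming Markdown source where headings start with `##` or `###`; the word bounds come from the guideline above, and the function names are illustrative.

```python
import re

# Matches H2 and H3 Markdown headings (not H1 or H4+).
HEADING = re.compile(r"^(#{2,3})\s+(.*)$")


def split_sections(markdown: str) -> list[tuple[str, str]]:
    """Split Markdown into (heading, body) pairs at H2/H3 headings."""
    sections: list[tuple[str, list[str]]] = []
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            sections.append((match.group(2).strip(), []))
        elif sections:
            sections[-1][1].append(line)
        # Text before the first H2/H3 (e.g. the H1 intro) is ignored.
    return [(heading, "\n".join(body).strip()) for heading, body in sections]


def audit_chunks(markdown: str, min_words: int = 150, max_words: int = 220) -> list[str]:
    """Report each section's word count against the target range."""
    report = []
    for heading, body in split_sections(markdown):
        words = len(body.split())
        if words < min_words:
            status = "too short"
        elif words > max_words:
            status = "too long, consider splitting"
        else:
            status = "ok"
        report.append(f"{heading}: {words} words ({status})")
    return report


page = "# Guide\nIntro.\n## What is GEO?\n" + "word " * 180 + "\n### Pricing\nShort section."
for line in audit_chunks(page):
    print(line)
```

A short section is not automatically a problem (a one-line definition can be ideal); the report is a prompt for review, not a rule.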

Authority Signals Implementation

Author and Expertise Signals:

- ✅ Author bylines with credentials and experience

- ✅ Person schema for authors, with sameAs links to their profiles

- ✅ Organization schema with legal name, logo, and social profiles

- ✅ Publication dates – Both published and modified timestamps
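The author, organization, and date signals above can be expressed together in Article JSON-LD. A minimal sketch, assuming schema.org’s `Article`, `Person`, and `Organization` types with the `datePublished` and `dateModified` properties; the author, profile URL, and organization values are placeholders.

```python
import json
from datetime import date


def article_jsonld(
    headline: str,
    author_name: str,
    author_profiles: list[str],
    org_name: str,
    org_logo_url: str,
    published: date,
    modified: date,
) -> str:
    """Build Article JSON-LD with author, publisher, and both timestamps."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "sameAs": author_profiles,  # profile URLs that identify the same person
        },
        "publisher": {
            "@type": "Organization",
            "name": org_name,
            "logo": {"@type": "ImageObject", "url": org_logo_url},
        },
        # schema.org Date values use ISO 8601 (YYYY-MM-DD).
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


print(article_jsonld(
    headline="LLM Optimization Guide",
    author_name="Jane Smith",
    author_profiles=["https://linkedin.com/in/janesmith"],
    org_name="Example Co",
    org_logo_url="https://example.com/logo.png",
    published=date(2025, 1, 10),
    modified=date(2025, 3, 15),
))
```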

Citation and Source Requirements:

- ✅ External citations – Link to authoritative sources for claims

- ✅ Data sourcing – Include survey methodology, sample sizes, dates

- ✅ Original research – Highlight unique insights and findings

- ✅ Regular updates – Refresh statistics and add current examples

User Experience Optimization

Technical Performance:

- ✅ Page speed – Sub-2.5s loading times

- ✅ Mobile responsiveness – Proper viewport configuration

- ✅ Clear navigation – Logical site structure and breadcrumbs

- ✅ Accessibility – Alt text, proper contrast ratios, semantic markup

Content Accessibility:

- ✅ Descriptive alt text – Include topic context in image descriptions

- ✅ Table headers – Proper th/td markup for data tables

- ✅ Link context – Descriptive anchor text beyond “click here”

Common Pitfalls to Avoid

Technical Pitfalls:

- Keyword stuffing – Unnatural, repetitive phrasing dilutes semantic clarity and makes passages less useful to extract

- Missing schema – Reduces content understanding and extraction

- JavaScript-dependent content – May not be accessible to AI crawlers

- Image-based text – Use HTML text instead of text in images

Content Pitfalls:

- Promotional language – Focus on helpful information over sales copy

- Outdated information – Maintain current statistics and examples

- Unclear scope – Always specify timeframes, conditions, and context

- Missing citations – Back up claims with credible sources

Content Templates & Examples for LLM Optimization

Effective LLM optimization requires content formats that AI models can easily extract and synthesize. These templates provide structured approaches for creating AI-friendly content while maintaining reader value.

FAQ Content Template

Structure: Question as H3, direct answer first, then elaboration

Example:

### What is the difference between GEO and traditional SEO?

**Summary**: GEO (Generative Engine Optimization) optimizes content for AI model retrieval and citation, while traditional SEO focuses on search engine ranking algorithms.

GEO emphasizes structured content, entity relationships, and semantic clarity to help AI models understand and cite information. Traditional SEO prioritizes keywords, backlinks, and technical factors to improve search rankings. Both approaches complement each other in modern search strategies.

**Key differences**:

- **Goal**: GEO targets AI citations vs SEO targets rankings
- **Metrics**: GEO measures mentions vs SEO measures traffic
- **Content**: GEO emphasizes structure vs SEO emphasizes keywords

Glossary Template

Purpose: Provide clear definitions for technical terms and concepts

Structure: Term, concise definition, context, related terms

Example:

## LLM Optimization Glossary

**Generative Engine Optimization (GEO)**: The practice of optimizing content for AI-powered search engines that generate answers rather than display ranked links.

**Large Language Model (LLM)**: AI systems like ChatGPT, Gemini, and Claude that can understand and generate human-like text responses.

**Retrieval-Augmented Generation (RAG)**: A technique that combines AI text generation with real-time information retrieval from external sources.

Step-by-Step Guide Schema

HowTo Schema Implementation: Use structured markup for procedural content

Example:

<div itemscope itemtype="https://schema.org/HowTo">
  <h2 itemprop="name">How to Optimize Content for LLM Citations</h2>
  <div itemprop="step" itemscope itemtype="https://schema.org/HowToStep">
    <h3 itemprop="name">Step 1: Structure Your Content</h3>
    <div itemprop="text">
      Use clear headings, bullet points, and FAQ formats to make content easily extractable by AI models.
    </div>
  </div>
  <div itemprop="step" itemscope itemtype="https://schema.org/HowToStep">
    <h3 itemprop="name">Step 2: Add Schema Markup</h3>
    <div itemprop="text">
      Implement FAQPage, Article, and Organization schema to help AI models understand your content structure.
    </div>
  </div>
</div>

Comparison Table Template

Purpose: Present structured comparisons that AI models can easily extract

| Feature | Traditional SEO | LLM Optimization |
| --- | --- | --- |
| Primary Focus | Rankings and traffic | Citations and mentions |
| Content Goal | Keyword targeting | Semantic clarity |
| Success Metrics | SERP position, CTR | Brand mentions in AI responses |
| Technical Requirements | Meta tags, sitemap | Schema markup, structured content |
| Content Format | Keyword-optimized pages | Chunk-friendly sections |

Author Bio Template

Purpose: Establish expertise and authority for E-E-A-T signals

Example:

<div itemscope itemtype="https://schema.org/Person">
  <img itemprop="image" src="author-photo.jpg" alt="Jane Smith, SEO Consultant">
  <h3 itemprop="name">Jane Smith</h3>
  <p itemprop="jobTitle">Senior SEO Strategist</p>
  <p itemprop="description">
    Jane has 12 years of experience in technical SEO and AI optimization,
    helping companies like <span itemprop="worksFor">TechCorp</span> improve
    their search visibility. She holds certifications in Google Analytics
    and has spoken at 15+ industry conferences.
  </p>
  <a itemprop="sameAs" href="https://linkedin.com/in/janesmith">LinkedIn</a>
  <a itemprop="sameAs" href="https://twitter.com/janesmith">Twitter</a>
</div>

Walkthrough: Applying the FAQ Template

- Identify common questions your audience asks about your topic

- Structure each question as an H3 heading

- Lead with a direct answer in the first sentence

- Add supporting detail in subsequent paragraphs

- Include FAQPage schema to help AI models understand the Q&A format

- Link to related content for deeper exploration


Best Tools for LLM Optimization

Effective LLM optimization requires specialized tools for monitoring AI citations, validating schema markup, and tracking brand mentions across AI platforms. Here are the essential tools for 2025.

Tools for Monitoring LLM Citations

ChatGPT Rank Tracker (Morningscore) – Tracks brand mentions and citations in ChatGPT responses

- Best for: Monitoring ChatGPT visibility and prompt-based tracking

- Pricing: Included in all Morningscore plans

- Key features: Prompt simulation, citation tracking, competitor analysis

Profound (Answer Engine Optimization) – Comprehensive AI search visibility platform

- Best for: Cross-platform AI mention tracking (ChatGPT, Gemini, Perplexity)

- Features: Brand mention analysis, sentiment tracking, competitor benchmarking

- Use case: Enterprise-level AI search optimization

Brand monitoring approach: Set up Google Alerts for “[Your Brand] + AI” or “[Your Brand] + ChatGPT” to catch informal mentions

Schema and Structured Data Validators

Google’s Rich Results Test – Validates schema markup for Google compatibility

- URL: search.google.com/test/rich-results

- Best for: Testing FAQ, Article, and Organization schema

- Free: Yes, essential for basic validation

Schema.org Markup Validator – Comprehensive schema validation

- Best for: Detailed schema debugging and optimization

- Supports: All schema.org types, including those Google does not use for rich results

Technical SEO Tools with Schema Support:

- Screaming Frog: Crawl and audit schema implementation

- Sitebulb: Visual schema analysis and optimization suggestions

- DeepCrawl (now Lumar): Enterprise schema monitoring and validation

AI Search Monitoring Dashboards

Custom Google Analytics 4 Setup:

- Track AI referrer traffic (chatgpt.com, perplexity.ai, etc.)

- Segment AI-referred sessions by UTM source values where AI platforms append them to outbound links

- Monitor zero-click behavior patterns from AI sources
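The same referrer segmentation can be prototyped on exported session data before you build it into GA4. A minimal sketch that maps a session’s referrer hostname, or a `utm_source` value on the landing URL when the referrer is missing, to an AI platform. The hostname list is an assumption based on the platforms named in this guide and needs maintaining as products change domains; some AI tools append a `utm_source` value to cited links, but not all do.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Assumed hostnames per platform; check them against your own referral reports.
AI_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def match_platform(host: str) -> Optional[str]:
    """Map a hostname (or a utm_source value that looks like one) to a platform."""
    host = host.lower().strip()
    if host.startswith("www."):
        host = host[4:]
    for domain, platform in AI_SOURCES.items():
        # Exact match or a true subdomain; "notchatgpt.com" must not match.
        if host == domain or host.endswith("." + domain):
            return platform
    return None


def classify_session(referrer: str, landing_url: str = "") -> Optional[str]:
    """Return the AI platform behind a session, or None if it is not AI-referred."""
    platform = match_platform(urlparse(referrer).hostname or "") if referrer else None
    if platform is None and landing_url:
        # Fall back to utm_source when the referrer was stripped.
        utm_source = parse_qs(urlparse(landing_url).query).get("utm_source", [""])[0]
        platform = match_platform(utm_source)
    return platform


sessions = [
    ("https://chatgpt.com/", ""),
    ("https://www.perplexity.ai/search?q=crm", ""),
    ("", "https://example.com/guide?utm_source=chatgpt.com"),
    ("https://www.google.com/", "https://example.com/guide"),
]
for referrer, landing in sessions:
    print(referrer or "(no referrer)", "->", classify_session(referrer, landing) or "not AI")
```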

Search Console Integration:

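- Monitor queries likely to trigger AI Overviews (Search Console includes them in standard Web search data rather than reporting them separately)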

- Track featured snippet performance (often cited by AI)

- Analyze CTR changes from AI search integration

ROI Calculators and Analytics

LLM Optimization ROI Calculator:

Estimated Monthly AI Search Volume: [X]

Current Brand Mention Rate: [Y]%

Average Customer Value: $[Z]

Potential Monthly Impact: X × (Y/100) × Z × Conversion Rate

Key Metrics to Track:

- Brand mention frequency in AI responses

- Citation attribution rate (how often you’re cited as a source)

- AI-referred traffic quality and conversion rates

- Share of voice vs. competitors in AI responses

Implementation Priority

- Start with free tools: Google’s validators and basic GA4 tracking

- Add monitoring: Set up brand mention alerts and basic AI traffic tracking

- Invest in platforms: Consider Profound or similar for comprehensive monitoring

- Scale with enterprise tools: Implement advanced schema auditing and tracking

Case Studies & Early Tests

Real-world examples demonstrate the tangible impact of LLM optimization strategies. These case studies show both successes and failures, providing practical insights for implementation.

Vercel’s AI Search Success

Challenge: Vercel needed to maintain developer tool visibility as search shifted to AI platforms.

Strategy:

- Concept ownership: Created comprehensive documentation covering Next.js, React, and deployment topics

- Structured content: Implemented clear headings, code examples, and FAQ sections

- Community engagement: Active presence in developer forums and GitHub discussions

Results:

- ChatGPT referrals: Grew from 1% to 10% of new signups in six months

- AI citation frequency: Became the most-cited source for Next.js questions

- Traffic quality: AI-referred users showed higher engagement and conversion rates

Key takeaway: Technical depth and clear documentation win in AI search for developer tools.

B2B SaaS Platform Case Study

Situation: Project management software company with strong traditional SEO but low AI visibility.

Implementation:

- Content restructuring: Converted long-form blog posts into FAQ-format sections

- Schema implementation: Added FAQPage markup to all help documentation

- Authority building: Published original research on remote work productivity

Timeline: 90-day implementation period

Results:

- AI mentions: 340% increase in brand mentions across ChatGPT and Perplexity

- Citation quality: Became go-to source for project management statistics

- Traffic impact: 15% increase in qualified leads from AI-referred traffic

Tally’s Growth Acceleration

Background: Form builder platform leveraged AI search to accelerate from $2M to $3M ARR.

Tactics:

- Question-focused content: Created comprehensive guides answering “how to build forms for X”

- Community presence: Active participation in relevant Reddit communities

- Regular updates: Maintained fresh examples and current feature documentation

Outcome: AI search became their largest acquisition channel within four months.

Industry Research: Search Engine Land Analysis

Study scope: Analysis of 5,000 HR and workforce management keywords across AI platforms.

Key findings:

- Top-of-funnel queries: Showed 60% shift toward AI-generated answers

- Citation patterns: Wikipedia, Reddit, and Forbes dominated across most topics

- Commercial queries: Traditional search remained stronger for bottom-funnel terms

Implications for strategy:

- Focus AI optimization on educational and informational content

- Maintain traditional SEO for commercial and transactional queries

- Build presence on community platforms like Reddit for organic mentions

Failed Experiments and Lessons Learned

Over-optimization attempt: One e-commerce site added excessive schema markup and FAQ sections to product pages.

- Result: No improvement in AI visibility, decreased traditional search performance

- Lesson: Balance AI optimization with user experience and traditional SEO

Keyword stuffing for AI: A content site tried using exact AI prompt language throughout articles.

- Result: Unnatural content that performed poorly across all channels

- Lesson: Write for humans first, then optimize for AI understanding

What Worked vs What Failed

Successful strategies:

- Clear, structured content with logical heading hierarchy

- Original data and research that provides unique value

- Regular content updates with fresh examples and statistics

- Community engagement building natural brand mentions

Failed approaches:

- Keyword stuffing with AI-style language

- Over-technical optimization at the expense of readability

- Ignoring traditional SEO in favor of AI-only tactics

- Promotional content without substantive information value

Advanced Use Cases

LLM optimization strategies vary significantly across industries and business models. These advanced implementations show how to adapt core principles for specific contexts and technical requirements.

Ecommerce: Structured Data for Products

Product Schema Optimization:

{
  "@context": "https://schema.org/",
  "@type": "Product",
  "name": "Wireless Bluetooth Headphones",
  "description": "Premium noise-canceling headphones with 30-hour battery life",
  "brand": {
    "@type": "Brand",
    "name": "AudioTech"
  },
  "offers": {
    "@type": "Offer",
    "price": "199.99",
    "priceCurrency": "USD",
    "availability": "https://schema.org/InStock"
  },
  "aggregateRating": {
    "@type": "AggregateRating",
    "ratingValue": "4.8",
    "reviewCount": "1247"
  }
}

Ecommerce Content Strategy:

- Buying guides: Create comprehensive guides for product categories

- Comparison tables: Structure feature comparisons with clear HTML tables

- FAQ sections: Address common purchase questions and concerns

- Review summaries: Aggregate customer feedback into key insights

AI Shopping Integration:

- Optimize product descriptions for voice search queries

- Include size guides, compatibility information, and use cases

- Structure shipping and return policies for easy AI extraction

Local SEO: Citations & Reviews for LLM Retrieval

LocalBusiness Schema Requirements:

{
  "@context": "https://schema.org",
  "@type": "LocalBusiness",
  "name": "Downtown Dental Clinic",
  "address": {
    "@type": "PostalAddress",
    "streetAddress": "123 Main Street",
    "addressLocality": "Austin",
    "addressRegion": "TX",
    "postalCode": "78701"
  },
  "telephone": "+1-512-555-0123",
  "openingHours": "Mo-Fr 08:00-17:00",
  "geo": {
    "@type": "GeoCoordinates",
    "latitude": 30.2672,
    "longitude": -97.7431
  }
}

Local Content Optimization:

- Location-specific FAQs: “What dental services are available in Austin?”

- Service area pages: Optimize for “[service] near me” queries

- Local citations: Ensure consistent NAP (Name, Address, Phone) across directories

- Review response strategy: Engage with reviews to build topical authority
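NAP consistency is easier to audit when listings are compared after normalization, so formatting differences (“St.” vs “Street”, phone punctuation) are not mistaken for real mismatches. A minimal sketch reusing the clinic from the LocalBusiness example; the abbreviation table and the directory listings are illustrative assumptions, not a complete address normalizer.

```python
import re
from dataclasses import dataclass


@dataclass
class Listing:
    source: str
    name: str
    address: str
    phone: str


# Illustrative, deliberately small abbreviation table.
ABBREVIATIONS = {"street": "st", "avenue": "ave", "suite": "ste", "road": "rd"}


def normalize_text(value: str) -> str:
    # Lowercase, turn punctuation into spaces, and collapse common abbreviations.
    words = re.sub(r"[^\w\s]", " ", value.lower()).split()
    return " ".join(ABBREVIATIONS.get(w, w) for w in words)


def normalize_phone(value: str) -> str:
    digits = re.sub(r"\D", "", value)
    # Treat a leading US country code as optional.
    return digits[1:] if len(digits) == 11 and digits.startswith("1") else digits


def nap_key(listing: Listing) -> tuple[str, str, str]:
    return (normalize_text(listing.name), normalize_text(listing.address), normalize_phone(listing.phone))


def find_mismatches(canonical: Listing, others: list[Listing]) -> list[str]:
    """List every field where a directory listing differs from the canonical NAP."""
    expected = nap_key(canonical)
    issues = []
    for listing in others:
        for field, want, got in zip(("name", "address", "phone"), expected, nap_key(listing)):
            if want != got:
                issues.append(f"{listing.source}: {field} differs ({got!r} vs {want!r})")
    return issues


website = Listing("Website", "Downtown Dental Clinic", "123 Main Street, Austin, TX 78701", "+1-512-555-0123")
directories = [
    Listing("DirectoryA", "Downtown Dental Clinic", "123 Main St., Austin TX 78701", "(512) 555-0123"),
    Listing("DirectoryB", "Downtown Dental", "123 Main Street, Austin, TX 78701", "512.555.0123"),
]
for issue in find_mismatches(website, directories) or ["All listings consistent"]:
    print(issue)
```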

Community Presence Building:

- Local forum participation: Engage in city-specific Reddit communities

- Google Business Profile optimization: Regular posts, photos, and Q&A responses

- Local media mentions: Build relationships with local news and business publications

Developer Integration: APIs, Embeddings, and Structured Metadata

Technical Documentation Optimization:

- Code examples: Provide complete, working code samples

- API endpoint documentation: Structure with clear parameters and responses

- Integration guides: Step-by-step tutorials with expected outcomes

- Error handling: Document common issues and solutions

Developer-Focused Schema:

{
  "@context": "https://schema.org",
  "@type": "SoftwareApplication",
  "name": "Payment Processing API",
  "applicationCategory": "DeveloperApplication",
  "operatingSystem": "Web",
  "offers": {
    "@type": "Offer",
    "price": "0",
    "priceCurrency": "USD"
  },
  "featureList": ["REST API", "Webhook support", "Multi-currency"]
}

Technical Content Strategy:

- GitHub documentation: Maintain comprehensive README files and wikis

- Stack Overflow presence: Answer questions related to your tools and APIs

- Technical blog posts: Deep-dive tutorials and best practices

ROI & Measurement for LLM Optimization

Measuring LLM optimization success requires new metrics and tracking approaches. Traditional SEO KPIs like rankings and click-through rates don’t capture the full value of AI-powered visibility.

Metrics to Track: LLM Citations, Traffic, and Conversions

Primary LLM Metrics:

- Brand mention frequency: How often your brand appears in AI responses

- Citation attribution rate: Percentage of mentions that include source attribution

- Share of voice: Your brand’s presence vs. competitors in AI responses

- AI-referred traffic: Visitors coming from AI platforms (chatgpt.com, perplexity.ai)
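The first three metrics can be computed from a log of prompts you run periodically against each AI platform. A minimal sketch, assuming you have already recorded, per response, which brands were mentioned and which domains were cited; the `AIResponse` structure, brand names, and domains are hypothetical. Share of voice here counts at most one mention per brand per response.

```python
from dataclasses import dataclass, field


@dataclass
class AIResponse:
    """One recorded answer to a tracked prompt (hypothetical logging format)."""
    prompt: str
    platform: str
    brands_mentioned: set[str]
    domains_cited: set[str] = field(default_factory=set)


def llm_metrics(responses: list[AIResponse], brand: str, brand_domain: str, competitors: set[str]) -> dict[str, float]:
    total = len(responses)
    mentioned = [r for r in responses if brand in r.brands_mentioned]
    # Attribution: responses that mention the brand AND cite its domain as a source.
    attributed = [r for r in mentioned if brand_domain in r.domains_cited]
    # Share of voice: the brand's mentions over all mentions of tracked brands.
    tracked = {brand} | competitors
    all_mentions = sum(len(r.brands_mentioned & tracked) for r in responses)
    return {
        "mention_rate": len(mentioned) / total if total else 0.0,
        "citation_attribution_rate": len(attributed) / len(mentioned) if mentioned else 0.0,
        "share_of_voice": len(mentioned) / all_mentions if all_mentions else 0.0,
    }


log = [
    AIResponse("best crm for startups", "ChatGPT", {"Acme", "Rival"}, {"acme.com"}),
    AIResponse("crm pricing comparison", "Perplexity", {"Rival"}, {"rival.com"}),
    AIResponse("what is a crm", "ChatGPT", {"Acme"}),
    AIResponse("crm for small teams", "Gemini", {"Rival"}),
]
print(llm_metrics(log, brand="Acme", brand_domain="acme.com", competitors={"Rival"}))
```

Because AI answers vary between runs, these rates are only meaningful over a reasonably large, fixed prompt set repeated on a schedule.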

Traffic Quality Indicators:

- Engagement metrics: Time on site, pages per session from AI sources

- Conversion rates: Lead generation and sales from AI-referred traffic

- Content consumption: Which pages AI visitors engage with most

- Return visitor rate: How often AI-discovered users return directly

Technical Performance Metrics:

- Schema implementation coverage: Percentage of pages with proper markup

- Content chunk optimization: How many sections meet AI-friendly formatting

- Entity consistency score: Brand mention alignment across properties

- Content freshness index: Percentage of content updated in last 6 months
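If your XML sitemap carries accurate lastmod dates, the content freshness index can be computed directly from them. A minimal sketch; approximating six months as 183 days is an assumption, and the page paths and dates are hypothetical.

```python
from datetime import date, timedelta


def freshness_index(last_modified: dict[str, date], today: date, window_days: int = 183) -> float:
    """Percentage of pages modified within the window (about six months by default)."""
    if not last_modified:
        return 0.0
    cutoff = today - timedelta(days=window_days)
    fresh = sum(1 for modified in last_modified.values() if modified >= cutoff)
    return 100.0 * fresh / len(last_modified)


pages = {
    "/guides/llm-optimization": date(2025, 6, 1),
    "/guides/schema": date(2025, 1, 15),
    "/blog/2024-trends": date(2024, 6, 1),
    "/pricing": date(2025, 3, 10),
}
print(f"{freshness_index(pages, today=date(2025, 6, 30)):.1f}% of pages updated in the last ~6 months")
```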

ROI Calculation Framework

LLM Optimization ROI Formula:

Monthly AI Search Volume × Brand Mention Rate × Average Customer Value × Conversion Rate = Monthly AI Revenue Impact

Example Calculation:

10,000 searches × 15% mention rate × $500 customer value × 3% conversion = $22,500 monthly impact
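The same formula as a small function makes it easy to rerun with the benchmark ranges below or with your own inputs. The inputs here are the illustrative figures from the example; like the formula itself, the result is a rough estimate rather than a forecast. Rates are passed as fractions (15% becomes 0.15).

```python
def monthly_ai_revenue_impact(
    search_volume: int,
    mention_rate: float,
    customer_value: float,
    conversion_rate: float,
) -> float:
    """Monthly AI Search Volume x Brand Mention Rate x Average Customer Value x Conversion Rate."""
    for name, rate in (("mention_rate", mention_rate), ("conversion_rate", conversion_rate)):
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"{name} must be a fraction between 0 and 1, got {rate}")
    return search_volume * mention_rate * customer_value * conversion_rate


impact = monthly_ai_revenue_impact(10_000, 0.15, 500, 0.03)
print(f"${impact:,.0f}")  # $22,500
```

Passing percentages such as 15 instead of 0.15 raises an error rather than silently inflating the estimate a hundredfold.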

Cost Factors to Include:

- Content creation and optimization time

- Schema implementation and technical work

- Monitoring tools and platform subscriptions

- Community engagement and PR efforts

Payback Period Analysis:

- Immediate impact: Traffic and lead generation within 30-60 days

- Medium-term gains: Brand authority building over 6-12 months

- Long-term value: Sustained competitive advantages and market share

ROI Calculator Implementation

Interactive ROI Calculator Variables:

- Current monthly search volume for target topics

- Estimated AI search adoption rate in your industry

- Average customer lifetime value

- Current organic conversion rates

- Planned investment in LLM optimization

Benchmark Data for Estimates [Source: Most cited domains in llms, 2025]:

- B2B SaaS: 8-15% AI mention rates for established brands

- E-commerce: 5-12% product mention rates in shopping queries

- Local services: 20-35% mention rates for location-specific queries

- Content publishers: 10-25% citation rates for informational content

Monthly Tracking Dashboard Setup:

Key Performance Indicators:

□ AI platform referral traffic (GA4 source tracking)

□ Brand mention tracking (manual or automated monitoring)

□ Citation quality score (attributed vs. non-attributed mentions)

□ Content performance in AI responses (topic coverage analysis)

□ Competitive share of voice (brand vs. competitor mention rates)

Troubleshooting Poor LLM Retrieval

When your content isn’t appearing in AI responses despite optimization efforts, systematic troubleshooting helps identify and resolve the underlying issues.

Why Content Isn’t Being Cited

Technical Barriers:

- JavaScript-dependent content: AI crawlers may not execute complex JavaScript

- Poor HTML structure: Missing semantic elements and proper heading hierarchy

- Blocked crawlers: Robots.txt or server configurations preventing AI bot access

- Slow loading: Pages that timeout during crawling get ignored
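A quick way to test the JavaScript barrier is to check whether a key sentence appears in the raw HTML a crawler receives, before any script runs. A minimal sketch using Python’s standard `html.parser` on HTML strings; fetching the page is left out, and the two sample pages are hypothetical. Text inside `script`, `style`, and `template` elements is ignored because it is not rendered content.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text that sits outside script, style, and template elements."""

    SKIP = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def text_in_raw_html(html: str, phrase: str) -> bool:
    """True if the phrase is present as visible text without running JavaScript."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.parts).split()).lower()
    return " ".join(phrase.split()).lower() in text


server_rendered = (
    "<html><body><article><h1>What is LLM optimization?</h1>"
    "<p>LLM optimization is the process of structuring content so AI models can cite it.</p>"
    "</article></body></html>"
)
client_rendered = (
    '<html><body><div id="root"></div><script>'
    'document.getElementById("root").innerText = "LLM optimization is the process of structuring content";'
    "</script></body></html>"
)
for label, html in (("server-rendered", server_rendered), ("client-rendered", client_rendered)):
    print(label, text_in_raw_html(html, "LLM optimization is the process"))
```

If the phrase only exists after JavaScript runs, crawlers that do not execute scripts will see an empty page.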

Content Quality Issues:

- Promotional language: Sales-heavy content without substantive information

- Outdated information: Stale content with old statistics and examples

- Unclear scope: Missing context about timeframes, conditions, or applicability

- No supporting evidence: Claims without citations or verification sources

Authority Problems:

- Missing author information: No bylines, credentials, or expertise signals

- Weak domain authority: Insufficient backlinks and domain recognition

- Inconsistent branding: Misaligned entity mentions across properties

- Poor citation practices: Failing to link to authoritative sources

Fixes: Schema Errors, Weak Authority, and Formatting

Schema Implementation Fixes:

<!-- Before: No FAQ schema markup -->
<div class="faq-item">
  <h3>What is LLM optimization?</h3>
  <p>It’s about making content AI-friendly.</p>
</div>

<!-- After: Proper FAQPage Schema -->
<div itemscope itemtype="https://schema.org/FAQPage">
  <div itemscope itemtype="https://schema.org/Question" itemprop="mainEntity">
    <h3 itemprop="name">What is LLM optimization?</h3>
    <div itemscope itemtype="https://schema.org/Answer" itemprop="acceptedAnswer">
      <div itemprop="text">
        LLM optimization is the practice of structuring content so AI models
        can easily find, understand, and cite it in their responses.
      </div>
    </div>
  </div>
</div>

Authority Building Actions:

- Add author bios: Include credentials, experience, and contact information

- Implement author schema: Link to social profiles and professional pages

- Update publication dates: Show content freshness with visible timestamps

- Cite external sources: Link to authoritative references and data sources

- Build topic clusters: Create comprehensive coverage of related subjects

Content Formatting Improvements:

- Lead with summaries: Start sections with key takeaways and direct answers

- Use clear headings: Structure content with descriptive H2/H3 elements

- Break into chunks: Keep sections to 150-220 words for optimal extraction

- Add supporting data: Include specific statistics, examples, and case studies

Pro Tips for Faster Retrievability

Content Optimization Shortcuts:

- FAQ conversion: Transform existing content into question-answer format

- Table creation: Convert lists and comparisons into HTML tables

- Summary addition: Add “Key Points” or “TL;DR” sections to long content

- Citation audit: Ensure all factual claims link to credible sources

Technical Quick Wins:

- Schema validation: Use Google’s Rich Results Test on all key pages

- Loading speed: Optimize images and eliminate render-blocking resources

- Mobile optimization: Ensure content displays properly on mobile devices

- Clean URLs: Use descriptive, keyword-rich URL structures
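Descriptive URLs are usually derived from the page title. A sketch of a slug generator; the eight-word cap is an arbitrary assumption, not a documented limit.

```python
import re
import unicodedata


def slugify(title, max_words=8):
    """Turn a page title into a lowercase, hyphen-separated URL slug."""
    # Decompose accented characters, then drop the accents (é -> e).
    text = unicodedata.normalize("NFKD", title)
    text = text.encode("ascii", "ignore").decode("ascii")
    # Keep runs of letters and digits; everything else separates words.
    words = re.findall(r"[a-z0-9]+", text.lower())
    return "-".join(words[:max_words])


print(slugify("What is LLM Optimization?"))           # what-is-llm-optimization
print(slugify("Café Schema Guide: FAQPage & HowTo"))  # cafe-schema-guide-faqpage-howto
```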

Authority Building Accelerators:

- Guest posting: Publish on recognized industry publications

- Data creation: Conduct surveys or research to generate citable statistics

- Expert quotes: Include interviews and insights from recognized authorities

- Community engagement: Participate in relevant forums and discussion platforms

Monitoring and Iteration:

- Set up monthly content audits to identify underperforming pages

- Track AI mention changes after implementing fixes

- Monitor competitor citations to identify content gaps

- A/B testing: Try different content formats and structures
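Tracking mention changes and competitor citations comes down to counting brand mentions across a fixed set of prompts. A minimal sketch of the share-of-voice calculation from the glossary, assuming you have already collected AI responses as plain text (for example, by running the same prompts each month). The brand names are placeholders, and the case-insensitive substring match is a simplification that misses paraphrases and can match inside longer words.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Return per-brand mention rate and share of voice.

    mention_rate: fraction of responses that mention the brand.
    share_of_voice: the brand's fraction of all brand mentions.
    A response counts once per brand, however often the name appears.
    """
    mentions = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:  # naive substring match
                mentions[brand] += 1
    total = sum(mentions.values())
    return {
        brand: {
            "mention_rate": mentions[brand] / len(responses) if responses else 0.0,
            "share_of_voice": mentions[brand] / total if total else 0.0,
        }
        for brand in brands
    }


responses = [
    "Acme and Globex both offer this feature.",
    "Acme is a popular choice.",
    "Consider Initech.",
]
result = share_of_voice(responses, ["Acme", "Globex", "Initech"])
print(result["Acme"])  # mentioned in 2 of 3 responses, 2 of 4 total mentions
```

Running the same prompt set before and after a fix gives a like-for-like comparison; changing prompts between runs makes the numbers incomparable.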

Book a professional LLM audit: [Internal link: AI Optimization Audit Service] to get personalized recommendations for improving your AI search visibility.

How to Get Started – Audit & Next Steps

Beginning your LLM optimization journey requires a systematic approach that builds on your existing SEO foundation while adding AI-specific elements.

Quick-Start Checklist

Week 1: Foundation Assessment

- [ ] Audit current AI visibility: Search for your brand in ChatGPT, Gemini, and Perplexity

- [ ] Check schema implementation: Use Google’s Rich Results Test on 10 key pages

- [ ] Review content structure: Identify pages lacking clear headings and FAQ formats

- [ ] Assess author information: Ensure bylines and credentials are visible

- [ ] Monitor brand mentions: Set up Google Alerts for “[Brand] + AI” searches
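The schema check can be scripted across many pages before turning to Google's Rich Results Test for detailed validation. A sketch that lists the `@type` values declared in a page's JSON-LD, using only the standard library. It assumes you have already fetched the HTML, and it ignores microdata and nested `@graph` arrays, so treat it as an inventory, not a validator.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the text of <script type="application/ld+json"> elements."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        # Script content may arrive in several chunks; concatenate them.
        if self._in_jsonld:
            self.blocks[-1] += data


def schema_types(html):
    """Return the top-level @type values declared in a page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types = []
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            types.append("INVALID JSON-LD")
            continue
        items = data if isinstance(data, list) else [data]
        for item in items:
            if not isinstance(item, dict) or "@type" not in item:
                continue
            declared = item["@type"]
            types.extend(declared if isinstance(declared, list) else [declared])
    return types
```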

Week 2: Technical Implementation

- [ ] Add FAQPage schema to top 5 most important pages

- [ ] Implement Article schema with author and organization information

- [ ] Create llms.txt file with structured resource inventory

- [ ] Optimize page loading speed for AI crawler accessibility

- [ ] Update robots.txt to allow AI crawlers (GPTBot, ChatGPT-User)
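A robots.txt change can be checked before it is deployed. A sketch using Python's standard `urllib.robotparser`; the rules are an example policy with placeholder paths (`/admin/`, `example.com`), not a recommendation for every site. GPTBot and ChatGPT-User come from the checklist; OAI-SearchBot is the crawler OpenAI documents for ChatGPT search, included here as an assumption to verify against OpenAI's current crawler documentation.

```python
from urllib.robotparser import RobotFileParser

# Example policy (placeholder paths): the named AI crawlers may fetch
# everything except /admin/. Disallow is listed before Allow on purpose.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: ChatGPT-User
User-agent: OAI-SearchBot
Disallow: /admin/
Allow: /

User-agent: *
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

results = {
    (agent, url): parser.can_fetch(agent, url)
    for agent in ("GPTBot", "ChatGPT-User", "OAI-SearchBot")
    for url in ("https://example.com/blog/llm-optimization",
                "https://example.com/admin/settings")
}
for (agent, url), allowed in results.items():
    print(f"{agent:14} {url:45} {'allowed' if allowed else 'blocked'}")
```

The rule order matters for this check: Python's parser applies the first matching rule in a group, while RFC 9309 (and Google) use the most specific match. Putting `Disallow: /admin/` before `Allow: /` makes both interpretations agree.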

Week 3: Content Optimization

- [ ] Convert top pages to FAQ format with question-based headings

- [ ] Add summary sections with key takeaways at the beginning

- [ ] Include publication dates and author credentials prominently

- [ ] Create comparison tables for product/service pages

- [ ] Link to authoritative sources for all factual claims

Week 4: Monitoring Setup

- [ ] Configure GA4 tracking for AI referrer traffic sources

- [ ] Set up brand mention alerts using Google Alerts and social listening

- [ ] Create tracking spreadsheet for monthly AI visibility assessment

- [ ] Establish baseline metrics for current citation frequency

- [ ] Schedule monthly audits to track improvement over time
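AI referrer tracking depends on recognizing which traffic sources belong to AI assistants. A minimal sketch of that matching logic, independent of how you configure it in GA4. The hostname list is an illustrative assumption: it is incomplete, and AI products change domains (ChatGPT moved from chat.openai.com to chatgpt.com), so check it against the sources that actually appear in your reports.

```python
from collections import Counter
from urllib.parse import urlparse

# Illustrative, non-exhaustive map of AI assistant hostnames to labels.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def classify_referrer(referrer):
    """Return the AI assistant label for a referrer URL or hostname, or None."""
    # Source values are often bare hostnames ("chatgpt.com"); prefix "//"
    # so urlparse reads them as a network location rather than a path.
    if "//" not in referrer:
        referrer = "//" + referrer
    host = urlparse(referrer).hostname or ""
    for domain, label in AI_REFERRERS.items():
        # Match the domain itself or any subdomain (www.perplexity.ai).
        if host == domain or host.endswith("." + domain):
            return label
    return None


def tally_ai_referrals(referrers):
    """Count visits per AI assistant, skipping non-AI referrers."""
    return dict(Counter(filter(None, map(classify_referrer, referrers))))
```

Matching on the full hostname (or a dot-prefixed suffix) avoids false positives such as a hypothetical notchatgpt.com that a plain substring check would catch.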

LLM Optimization Audit Template

Technical Audit Components:

Page Performance Checklist:

□ Largest Contentful Paint (LCP) under 2.5 seconds

□ Mobile-friendly design and functionality

□ Clean HTML structure with semantic elements

□ Proper heading hierarchy (H1, H2, H3)

□ Schema markup implementation

□ Author and publication date visibility

□ Internal linking to related content

□ External citations to authoritative sources
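The heading-hierarchy item is easy to automate. A sketch using the standard library's `html.parser` that records heading levels in document order and flags skipped levels (for example, H2 directly followed by H4). The "exactly one H1" rule is a common convention assumed here, not a requirement of HTML.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Record h1-h6 levels in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def audit_headings(html):
    """Return a list of heading-structure problems (empty if none)."""
    parser = HeadingAudit()
    parser.feed(html)
    parser.close()
    problems = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:  # assumed convention: one H1 per page
        problems.append(f"expected exactly one <h1>, found {h1_count}")
    for prev, curr in zip(parser.levels, parser.levels[1:]):
        if curr > prev + 1:  # going deeper by more than one level
            problems.append(f"skipped level: h{prev} -> h{curr}")
    return problems


print(audit_headings("<h1>Guide</h1><h2>Setup</h2><h4>Details</h4>"))
```

Moving back up (H3 to H2) is allowed; only jumps deeper by more than one level are reported.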

Content Structure Assessment:

□ Clear, descriptive headings

□ Summary/key takeaways section

□ FAQ format where appropriate

□ Scannable paragraphs (2-3 sentences)

□ Bulleted lists and tables

□ Original data and insights

□ Recent examples and statistics

□ Natural, conversational language
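The scannable-paragraphs item can be spot-checked with a script. A naive sketch, assuming paragraphs are separated by blank lines and that a sentence ends at `.`, `!`, or `?` followed by whitespace or the end of the paragraph. Abbreviations such as "e.g." inflate the count, so use the output to pick paragraphs for manual review.

```python
import re

# Naive sentence boundary: one or more of . ! ? followed by whitespace
# or the end of the paragraph. Abbreviations like "e.g." are over-counted.
SENTENCE_END = re.compile(r"[.!?]+(?:\s+|$)")


def long_paragraphs(text, max_sentences=3):
    """Return (paragraph_index, sentence_count) for paragraphs over the limit."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    flagged = []
    for index, para in enumerate(paragraphs):
        count = len(SENTENCE_END.findall(para))
        if count > max_sentences:
            flagged.append((index, count))
    return flagged


sample = "One. Two.\n\nA. B. C. D. E."
print(long_paragraphs(sample))  # second paragraph has 5 sentences
```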

Authority Evaluation:

- Domain authority score and backlink profile

- Author expertise and credential display

- Organization schema and brand entity information

- Citation practices and source credibility

- Content freshness and update frequency

Implementation Roadmap

Phase 1 (Months 1-2): Foundation Building

- Complete technical audit and fix critical issues

- Implement basic schema markup on priority pages

- Optimize content structure and formatting

- Establish monitoring and tracking systems

Phase 2 (Months 3-4): Content Enhancement

- Create comprehensive FAQ sections for key topics

- Develop original research and data assets

- Build topical authority through content clustering

- Strengthen author profiles and expertise signals

Phase 3 (Months 5-6): Scale and Optimize

- Expand schema implementation across entire site

- Launch community engagement and PR initiatives

- Develop advanced tracking and attribution methods

- Test and iterate based on performance data

Getting Professional Help: Consider hiring specialists for:

- Technical implementation: Schema markup, site speed optimization

- Content strategy: Topic clustering, authority building

- Monitoring setup: Advanced tracking and attribution systems

- Competitive analysis: Benchmarking against industry leaders

FAQ

What is LLM optimization?

Summary: LLM optimization is the practice of structuring and creating content so that AI models like ChatGPT, Gemini, and Perplexity can easily find, understand, and cite your content in their responses to user queries.

LLM optimization involves technical elements (schema markup, clean HTML), content formatting (FAQ structures, clear headings), and authority building (author credentials, citations) to improve your visibility in AI-generated answers.

How is GEO different from SEO?

GEO (Generative Engine Optimization) optimizes for AI model retrieval and citation, while traditional SEO focuses on search engine ranking algorithms. GEO emphasizes content structure and entity relationships, while SEO prioritizes keywords and backlinks. Both approaches complement each other in modern search strategies.

What schema should I use for LLMs?

Priority schema types for LLM optimization:

- FAQPage: For question-and-answer content sections

- Article: With author, publication date, and organization information

- Organization: Brand entity information and social profiles

- HowTo: For step-by-step procedural content

- Product/Service: For commercial pages with structured data
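For the Article type, the author, dates, and publisher carry the authority and freshness signals discussed earlier. A sketch of the JSON-LD, assuming placeholder names, URLs, and dates. `headline`, `author`, `publisher`, `datePublished`, and `dateModified` are schema.org Article properties and `sameAs` is a schema.org property for linking profiles; the `build_article_jsonld` helper itself is hypothetical.

```python
import json
from datetime import date


def build_article_jsonld(headline, author_name, author_profiles,
                         org_name, org_url, published, modified):
    """Build a schema.org Article with a Person author and Organization publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "sameAs": author_profiles,  # social and professional profile URLs
        },
        "publisher": {"@type": "Organization", "name": org_name, "url": org_url},
        "datePublished": published.isoformat(),  # ISO 8601, e.g. 2025-01-15
        "dateModified": modified.isoformat(),
    }


article = build_article_jsonld(
    headline="What Is LLM Optimization?",
    author_name="Jane Doe",                                   # placeholder
    author_profiles=["https://www.linkedin.com/in/example"],  # placeholder
    org_name="Example Co",                                    # placeholder
    org_url="https://example.com",
    published=date(2025, 1, 15),
    modified=date(2025, 3, 1),
)
print(json.dumps(article, indent=2))
```

Keeping `dateModified` in sync with the visible "last updated" date on the page avoids sending conflicting freshness signals.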

How do I troubleshoot LLM visibility issues?

Common fixes for poor AI retrieval:

- Check schema implementation: Validate markup using Google’s Rich Results Test

- Improve content structure: Add clear headings and FAQ formatting

- Update author information: Include credentials and expertise signals

- Enhance content freshness: Add recent statistics and update publication dates

- Fix technical issues: Ensure fast loading and mobile optimization

What tools help with LLM optimization?

Essential tool categories:

- Citation tracking: ChatGPT Rank Tracker, Profound, custom brand monitoring

- Schema validation: Google Rich Results Test, Schema.org validator

- Technical auditing: Screaming Frog, Sitebulb for crawling and analysis

- Analytics: GA4 setup for AI referrer tracking, Search Console integration

- Content optimization: AI-powered content analysis and optimization tools

Does LLM optimization replace traditional SEO?

No, LLM optimization complements traditional SEO. Many AI systems use traditional search infrastructure for content retrieval. Google’s AI Overviews, for example, often pull from high-ranking organic results. The best approach maintains existing SEO foundations while adding AI-specific optimizations.

How long does it take to see LLM optimization results?

Timeline varies by implementation scope:

- Technical fixes: 2-4 weeks for schema and structure improvements

- Content optimization: 6-8 weeks for new content to be indexed and recognized

- Authority building: 3-6 months for significant brand recognition improvements

- Competitive positioning: 6-12 months for sustained visibility advantages

What’s the ROI of LLM optimization?

ROI depends on your industry and implementation quality:

- B2B companies report 8-15% brand mention rates in AI responses

- E-commerce brands see 5-12% product citations in shopping queries

- Local services achieve 20-35% mention rates for location-specific queries

- Content publishers average 10-25% citation rates for informational topics

Calculate potential impact using: Search Volume × Mention Rate × Customer Value × Conversion Rate
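As a worked example with hypothetical inputs: 10,000 searches × 0.10 mention rate = 1,000 mentions; × 0.02 conversion rate = 20 conversions; × $500 customer value = $10,000 for whatever period the search volume covers. The same formula as a small Python function, with a check that both rates are entered as fractions rather than percentages:

```python
def estimated_value(search_volume, mention_rate, customer_value, conversion_rate):
    """Search Volume x Mention Rate x Customer Value x Conversion Rate.

    Rates are fractions (0.10 for 10%); the result covers the same
    period as search_volume.
    """
    for name, rate in (("mention_rate", mention_rate),
                       ("conversion_rate", conversion_rate)):
        if not 0 <= rate <= 1:
            raise ValueError(f"{name} must be a fraction between 0 and 1")
    return search_volume * mention_rate * customer_value * conversion_rate


print(estimated_value(10_000, 0.10, 500, 0.02))  # roughly 10,000
```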

Glossary

Answer Engine Optimization (AEO): Optimization strategies focused on getting content featured in direct answer formats across search platforms, including both traditional featured snippets and AI-generated responses.

Chunk-level Optimization: The practice of optimizing individual content sections (typically 150-300 words) for AI extraction, ensuring each chunk can stand alone as a complete answer.

Entity Consistency: Maintaining aligned brand mentions, names, and descriptions across all digital properties to strengthen AI model understanding of your organization.

Generative Engine Optimization (GEO): The comprehensive practice of optimizing content ecosystems for AI-powered search engines that generate synthesized answers rather than displaying ranked links. [Source: AI all purpose, 2025]

Large Language Model (LLM): AI systems trained on vast amounts of text data that can understand and generate human-like responses, such as the models behind ChatGPT, Gemini, Claude, and Perplexity.

LLM Optimization (LLMO): The specific techniques and strategies used to make content more discoverable and citable by large language models in their generated responses.

Query Fan-Out: The process by which AI systems expand a single user query into multiple related sub-queries to gather comprehensive information for response generation. [Source: AI all purpose, 2025]

Retrieval-Augmented Generation (RAG): A technique that combines AI text generation capabilities with real-time information retrieval from external sources, enabling more accurate and current responses.

Semantic Clarity: The degree to which content clearly expresses its meaning and context in ways that AI models can easily understand and extract.

Share of Voice (SOV): In LLM optimization, the percentage of relevant AI responses that mention or cite your brand compared to competitors.

Vector Database: A specialized database that stores content as mathematical vectors (embeddings) representing semantic meaning, enabling AI models to find conceptually related information.

Zero-Click Search: Search behavior where users get their answers directly from AI responses without clicking through to source websites, representing a fundamental shift in search interaction patterns.

Conclusion

The shift to AI-powered search represents the most significant change in information discovery since Google’s PageRank algorithm. Large language models aren’t just changing how people find information—they’re reshaping the entire concept of search visibility.

The window for competitive advantage is open now. While many businesses still focus exclusively on traditional SEO, early adopters of LLM optimization are capturing a disproportionate share of voice in AI responses. Companies that implement structured content, build topical authority, and optimize for AI retrieval today will dominate tomorrow's search landscape.